I have a computer science degree. I work in IT, and have done so for many years. In that period “classical” computers have advanced by leaps and bounds. I remember teletypes and paper tape, and punched cards too. I also remember when a top-notch disk drive was the size of a washing machine and the cost of a car. It provided a miserly 10 megabytes of storage. My disk drive today is the size of my wallet and cost £46.99. It provides a terabyte of storage. It’s currently somewhere in my bedroom drawer amongst my socks. We have a computer in every bedroom, plus tablets, consoles, and much much more. My phone has more processing power than NASA had when they put a man on the moon. It’s pretty much the same for everybody I know. We have phenomenal computing power at our fingertips. We have the internet. Computers have revolutionized our lives. They have changed our lives for the better.

Quantum computing hasn’t changed our lives for the better

But quantum computing hasn’t changed our lives for the better. Moreover, it looks like it’s going to stay that way. Quantum computing has been around now for nearly forty years, during which time real computing has left it in the dust. See the timeline section of the Wikipedia quantum computing article, and ask yourself where’s the parallel adder? Where’s the equivalent of Atlas, or the MU5? I went to Manchester University, see the history on the Manchester Computers article on Wikipedia. Quantum computers don’t show similar progress. Au contraire, they haven’t even got off the ground. You won’t be buying one in PC World any time soon. Yes, you hear about things like the D-wave quantum computer, which costs fifteen million dollars. It’s a black ten-foot cube, like something out of a science fiction movie:

D-wave computer and co-founder and CTO Dr Geordie Rose, image credit D-wave


But you also hear about the skepticism. People ask if it’s really a quantum computer. See for example Will Bourne’s January 2014 article D-Wave’s Dream Machine. It includes sentences like this: “He believes scientific rigor and transparency are being clouded by Rose’s commercial ambitions and “hype”, and he fears that Rose’s overreach could tarnish the entire field of quantum computing research, setting it back years”. Also see James Vincent’s 2016 article Biggest ever quantum chip announced, but scientists aren’t buying it. He says this: “A study published in Science in 2014 found that tasks performed on the company’s machines were no faster than conventional computers”.

Report cools down quantum computing hype

Michael Biercuk wrote an interesting article in August 2017 called Hype and cash are muddying public understanding of quantum computing. Another interesting article was The Argument Against Quantum Computers dating from February 2018 where Katia Moskvitch interviewed Gil Kalai. He said qubits in superposition will inevitably be corrupted by noise, and getting the noise down is a showstopper of a fundamental issue rather than just a mere matter of engineering. Yet another interesting article was Report cools down quantum computing hype written by Katherine Bourzac in December 2018. She referred to a National Academies report and said this: “A report released Dec. 4 by the National Academies of Sciences, Engineering, and Medicine throws some cold water on the hype smouldering around quantum computing”.

We’re not any closer

There’s scepticism for quantum computers even when they come from a company like IBM. See the January 2019 Wired article by Amit Katwala, who said IBM’s quantum computer is important, but it’s far from ready. The physical structure of the IBM Q System One was designed by Map Project Office, an industrial design consultancy. It’s been deliberately designed to look impressive. That’s why it’s a cross between a supercomputer and a brain in a jar, all in a nine-foot crystal cube:

IBM Q System One image from IBM, see reportage at Serve the home


Yes, it’s great presentation, and a captivating aesthetic. But note this: “A 20-qubit system is unlikely to be practically useful, says Robert Young, director of the Lancaster Quantum Technology Centre”. Also see Why Experts Are Skeptical of IBM’s New Commercial Quantum Computer by Ryan F Mandelbaum. He quotes Andrew Childs from the University of Maryland saying this: “figuring out how to make a lot of low-noise qubits is a lot more important than figuring out how to put them in a beautiful package”. Mandelbaum ends up saying that despite IBM’s flashy announcement, we’re not any closer to having a broadly useful, error-corrected quantum computer.

A 64-qubit quantum computer is not like a 64-bit computer

I empathize with the sentiment because there’s something they don’t usually tell you about in the popular press. They’ll talk about a 64-qubit quantum computer as if it were something like a 64-bit classical computer. But see the Wikipedia article on 64-bit computing. It’s “the use of processors that have datapath widths, integer size, and memory address widths of 64 bits (eight octets). Also, 64-bit computer architectures for central processing units (CPUs) and arithmetic logic units (ALUs) are those that are based on processor registers, address buses, or data buses of that size”. A 64-qubit quantum computer is nothing like that. It merely has 64 qubits at its heart. It takes 4 bits to make up one hexadecimal character, so we need 64 bits for 16 hex characters, like this: FFFF FFFF FFFF FFFF. The IBM Q System One has only 20 qubits. In hex terms that’s FFFFF. It really isn’t much.
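The bits-to-hex arithmetic above can be checked with a few lines of Python. This is only a sketch of the classical register-width sums; the function names are made up for illustration, and it says nothing about how a qubit register behaves.

```python
def hex_digits_needed(n_bits: int) -> int:
    """Number of hex characters needed for n_bits, at 4 bits per character."""
    return -(-n_bits // 4)  # ceiling division


def all_ones_hex(n_bits: int) -> str:
    """Largest value that fits in n_bits, written in uppercase hexadecimal."""
    return format((1 << n_bits) - 1, "X")


print(hex_digits_needed(64))  # 16
print(all_ones_hex(64))       # FFFFFFFFFFFFFFFF
print(all_ones_hex(20))       # FFFFF
```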

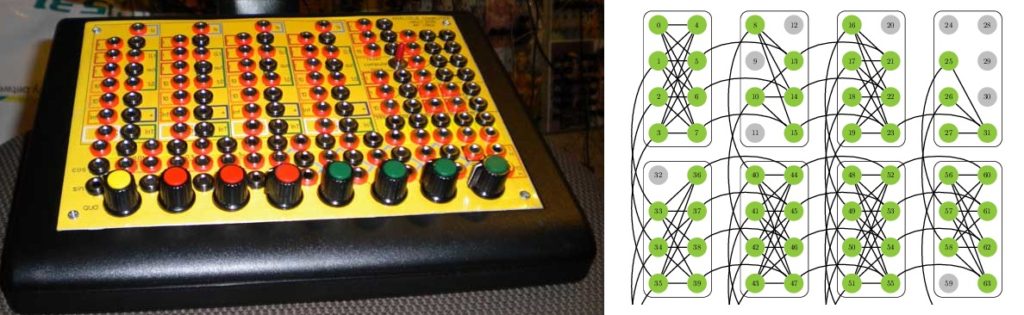

Analogue computers

That’s not to say all ongoing work in the field of quantum computing must be useless. People have complained that D-wave are only making analogue computers rather than real quantum computers. But as a person who takes a “realist” approach to physics, I don’t have a problem with that. Particularly since analogue computers can be very powerful, and very useful.

Analogue computer and D-wave lattice, see pinky on SDIY and Quantum annealing with more than one hundred qubits


A slide rule is an analogue computer, we used them to design atom bombs before calculators were around. They did the job. An abacus did the job in China for a couple of thousand years. Other analogue computers used water and did the job. For example, if you wanted to find a path through a maze, you could pump water into the entrance and throw in some coloured dye. As the water escapes from the exit, the dye will trace out the path through the maze. Other analogue computers used electronics to do the job, such as for fire control in a battleship. So I wouldn’t rule out all of the engineering and manufacturing work that’s been ongoing. Especially since the nitty gritty stuff can deliver a serendipitous spinoff, such as the transistor.

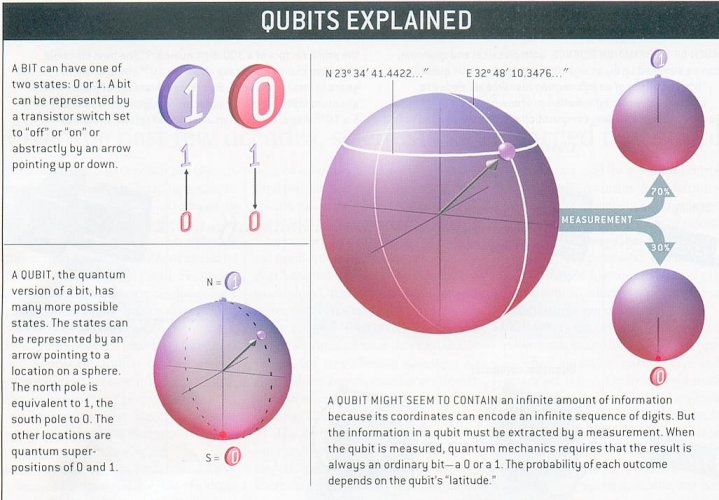

Fundamental physics raises fundamental issues

I’d say the real issue is the fundamental physics. Take a look at the Wikipedia qubit article. It says this: “A qubit is a two-state (or two-level) quantum-mechanical system, one of the simplest quantum systems displaying the peculiarity of quantum mechanics. Examples include: the spin of the electron in which the two levels can be taken as spin up and spin down”. The article also says quantum mechanics allows the qubit to be in a coherent superposition of both states simultaneously, a property “which is fundamental to quantum mechanics and quantum computing”. However you could make the same claim about a vector pointing North East. It points North, and it points East:

Qubit image from The Future of Computing – Quantum & Qubits by Sam Sattel autodesk


Then if you’ve read up on the history of physics and know about the electron and the wave nature of matter, you know that superposition is just a wave phenomenon. We can make electrons and positrons out of photons in gamma-gamma pair production, and we can diffract electrons. See the Wikipedia article on the superposition principle, which has a section on wave superposition. Also see the article on the Heisenberg uncertainty principle, which is “inherent in the properties of all wave-like systems”. An electron is a wave-like system. How are you going to leverage a supercomputer out of a modest number of wave-like systems with an indeterminate state? I’ve read about the history of quantum mechanics, and I do not believe in magic. So it feels to me as if the fundamental physics raises fundamental issues here. I just can’t see how quantum computing is ever going to work.

Is spookiness under threat?

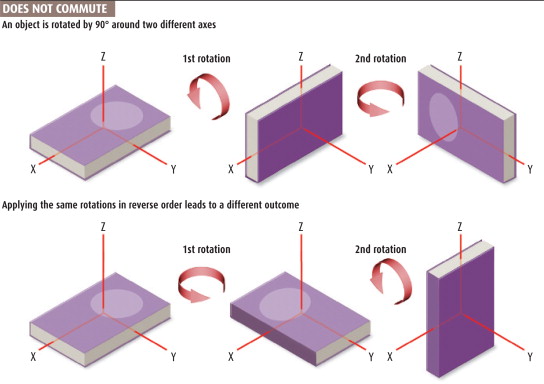

My attitude is coloured by a 2007 New Scientist article written by Mark Buchanan called Quantum Entanglement: Is Spookiness Under Threat? He referred to a paper by Joy Christian entitled Disproof of Bell’s Theorem by Clifford Algebra Valued Local Variables. In essence Christian argued that John Bell got his famous theorem wrong because he assumed that hidden variables commute. That’s because experimentally observed values +1 and -1 look like they commute. However Bell didn’t consider rotations, which do not commute. For rotations, the result depends on the sequence:

Rotations image from New Scientist
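The point about sequence can be shown with two quarter-turns. The sketch below is not taken from Christian's paper or the New Scientist article; it is just an illustration using ordinary 3×3 rotation matrices, with the axes and the test vector chosen arbitrarily. The numbers +1 and -1 commute under multiplication, whereas the two rotations give different results depending on which is applied first.

```python
def apply(matrix, vector):
    """Multiply a 3x3 matrix by a 3-component vector."""
    return [sum(matrix[i][k] * vector[k] for k in range(3)) for i in range(3)]


# 90-degree rotations about the x axis and the z axis (integer entries, so no rounding).
ROT_X_90 = [[1, 0, 0], [0, 0, -1], [0, 1, 0]]
ROT_Z_90 = [[0, -1, 0], [1, 0, 0], [0, 0, 1]]

v = [0, 1, 0]  # arbitrary starting vector along the y axis

x_then_z = apply(ROT_Z_90, apply(ROT_X_90, v))
z_then_x = apply(ROT_X_90, apply(ROT_Z_90, v))

print(x_then_z)  # [0, 0, 1]
print(z_then_x)  # [-1, 0, 0]
print((+1) * (-1) == (-1) * (+1))  # True: plain +1 and -1 values do commute
```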


The article says this: “In 1843, the Irish mathematician William Rowan Hamilton found a way to capture this non-commuting property in a set of number-like quantities called quaternions. Later, the English mathematician William Clifford generalised Hamilton’s quaternions into what modern mathematicians call Clifford algebra, widely considered the best mathematics for representing rotations. So convenient are quaternions that they are commonly used in computer graphics and aviation”. This is William Kingdon Clifford, the man who came up with the space theory of matter. The man who said “I hold in fact: (1) That small portions of space are in fact of a nature analogous to little hills on a surface which is on the average flat; namely, that the ordinary laws of geometry are not valid in them”. I’m confident enough about that because of Schrödinger’s 1926 paper, where on page 26 he talked about light rays showing remarkable curvature and getting into a small closed path. He was talking about the electron, and I know that electron spin is real. See Hans Ohanian’s 1984 paper What is spin? He said “the means for filling the gap have been at hand since 1939, when Belinfante established that the spin could be regarded as due to a circulating flow of energy”.

Gif courtesy of Adrian Rossiter’s torus animations, S-orbital image from the 2010 Encyclopaedia Britannica


An electron goes around and around in a uniform magnetic field because it’s a dynamical “spinor”. The spin is a compound spin ½ rotation, but it’s hidden because the electron looks like a standing wave. So we have a hidden variable which is a rotation. Which makes it clear to me that Christian was essentially correct. What’s not to like?

Burn the heretic

Buchanan’s New Scientist article said “twenty years ago, it was heretical even to raise such an idea”. It seems it still is, because Christian received some awful opprobrium from the likes of Scott Aaronson, a vocal advocate of quantum computing. See Aaronson’s 2012 blog post entitled I was wrong about Joy Christian. It is unpleasant. There are other similar posts. Christian’s refutation of Aaronson’s claims makes for interesting reading. See section D where he talks about Aaronson: “His campaign involved mockery, defamation, incitement, name-calling, cyber-bullying, cyber-mobbing, and various other forms of intimidation tactics and ad hominem attacks, rationalized by reiteration of some incorrect criticisms of my argument previously advanced by others”. It would seem that Aaronson thought Christian’s physics was some kind of threat to his quantum computing livelihood. Christian also says “The purpose of his shaming campaign was not just public humiliation and discrediting of my research, but, in his own words, also to starve me off by cutting off my financial and academic supports, thereby thwarting my ability to continue my work”. Apparently this is fine by the University of Texas at Austin. It would seem that academics think it’s perfectly acceptable to hurl insults and impose censorship. It would seem that that’s the way they are, and have been for years. It’s the modern equivalent of burn the heretic. Interesting reading indeed.

How Space and Time Could Be a Quantum Error-Correcting Code

Something else that’s interesting reading is How Space and Time Could Be a Quantum Error-Correcting Code. It was written by Natalie Wolchover, and appeared in Quanta magazine in January 2019. It makes interesting reading for a very different reason: it’s extremely hypothetical. It starts by saying this: “In 1994, a mathematician at AT&T Research named Peter Shor brought instant fame to “quantum computers” when he discovered that these hypothetical devices could quickly factor large numbers – and thus break much of modern cryptography”. How do you “discover” what a hypothetical device can do? Especially when “qubits are maddeningly error-prone” such that “the feeblest magnetic field or stray microwave pulse” causes them to undergo bit flips or phase flips? The article gets even more hypothetical, because it says in 1995 Shor came up with a proof that “quantum error-correcting codes” exist. It quotes Scott Aaronson saying “This was the central discovery in the ’90s that convinced people that scalable quantum computing should be possible at all”. The problem, of course, is that it isn’t a real discovery. It’s not like discovering America, or discovering penicillin.

A deep connection between quantum error correction and the nature of space, time and gravity

The article then gets yet more hypothetical: “But in the dogged pursuit of these codes over the past quarter-century, a funny thing happened in 2014, when physicists found evidence of a deep connection between quantum error correction and the nature of space, time and gravity”. Surely that’s so hypothetical it’s hyperbole? Whatever next? This: “In Albert Einstein’s general theory of relativity, gravity is defined as the fabric of space and time – or “space-time” – bending around massive objects. (A ball tossed into the air travels along a straight line through space-time, which itself bends back toward Earth)”. That’s wrong. That isn’t how gravity works. A ball tossed into the air doesn’t travel along a straight line through spacetime. Spacetime is a mathematical abstraction that models space at all times, so there’s no motion through it. Moreover spacetime curvature relates to the tidal force, not the force of gravity. Take a look at the room you’re in. The force of gravity is 9.8 m/s² at the floor and at the ceiling. So there’s no detectable tidal force, and so no detectable spacetime curvature. But your ball still falls down.
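To put a rough number on the floor-versus-ceiling comparison, here is a back-of-envelope sketch using Newton's inverse-square law. The 9.8 m/s² figure is the one given above; the Earth radius and the ceiling height are my own assumed values, and the function name is purely illustrative.

```python
G_FLOOR = 9.8             # m/s^2 at floor level, the figure used in the text
EARTH_RADIUS_M = 6.371e6  # assumed mean radius of the Earth, in metres
CEILING_HEIGHT_M = 2.5    # assumed height of the room, in metres


def g_at_height(height_m: float) -> float:
    """Inverse-square fall-off of g with distance from the Earth's centre."""
    return G_FLOOR * (EARTH_RADIUS_M / (EARTH_RADIUS_M + height_m)) ** 2


difference = g_at_height(0.0) - g_at_height(CEILING_HEIGHT_M)
print(f"floor minus ceiling: {difference:.2e} m/s^2")
print(f"as a fraction of g:  {difference / G_FLOOR:.2e}")
```

Under those assumptions the difference comes out at under one part in a million of g across the height of the room, while the ball is still accelerating downwards at the full 9.8 m/s².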

Quantum gravity is a castle in the air

Then comes some more hype, this time about quantum gravity: “But powerful as Einstein’s theory is, physicists believe gravity must have a deeper, quantum origin from which the semblance of a space-time fabric somehow emerges”. Who believes that? I don’t. I believe quantum gravity is a castle in the air. I also believe quantum electrodynamics works after a fashion because when the electron and the proton attract one another, they “exchange field” such that the resultant hydrogen atom has very little in the way of an electromagnetic field. You can mentally chop up this exchanged field into chunks or quanta and say each is a virtual photon. But note that the electron and proton move towards each other because of the screw nature of electromagnetism. Because they’re dynamical “spinors”. It’s something like the way counter-rotating vortices move towards one another. The motion does not occur because they’re exchanging particles. See the peculiar notion of exchange forces part I and part II by Cathryn Carson. Hydrogen atoms don’t twinkle, and magnets don’t shine. In similar vein Einstein made it clear that light curves downwards because the speed of light is spatially variable. Not because gravitons are flying around.

Lies-to-children about anti-de Sitter space

It gets worse, because next come some lies-to-children about anti-de Sitter space. Wolchover says “three young quantum gravity researchers came to an astonishing realization”. She tells us “they were working in physicists’ theoretical playground of choice: a toy universe called ‘anti-de Sitter space’ that works like a hologram”. And that “the bendy fabric of space-time in the interior of the universe is a projection that emerges from entangled quantum particles living on its outer boundary”. Oh boy, it’s the holographic universe. And guess what? The holographic emergence of space-time works just like a quantum error-correcting code, and space-time itself is a code!

Absurd claims about spacetime

It gets even worse, because then we get absurd claims like this: “John Preskill, a theoretical physicist at the California Institute of Technology, says quantum error correction explains how space-time achieves its “intrinsic robustness,” despite being woven out of fragile quantum stuff”. Again, spacetime is a mathematical abstraction that models space at all times. So there is no motion in it, so these guys don’t understand general relativity. They don’t understand black holes either. Why? Because the article says this: “The language of quantum error correction is also starting to enable researchers to probe the mysteries of black holes: spherical regions in which space-time curves so steeply inward toward the center that not even light can escape”. That’s wrong. Light doesn’t follow the curvature of spacetime. It curves wherever there’s a gradient in the speed of light, which is also a gradient in gravitational potential. Like Don Koks the physicsFAQ editor said, the ascending light beam speeds up. In a black hole the ascending light beam doesn’t curve back round. Instead it doesn’t get off first base. That’s because black holes are places where the “coordinate” speed of light is zero. Not “paradox-ridden places where gravity reaches its zenith and Einstein’s general relativity theory fails”.

HaPPY code and Tinkertoys

Things go seriously downhill after that. Because we then get Juan Maldacena “discovering” that the bendy space-time fabric in the interior of anti-de Sitter space is “holographically dual” to a quantum theory of particles living on the lower-dimensional, gravity-free boundary. Again, this isn’t a real discovery. It’s a conjecture claiming that there is some miraculous equivalence between two other conjectures. Wolchover’s article then talks about the HaPPY code and Tinkertoys tiling space, wherein a black hole is defined by “the breakdown of correctability” and is “like a sink for your ignorance”. Oh, the irony. Then we get the black hole information paradox, “which asks what happens to all the information that black holes swallow. Physicists need a quantum theory of gravity to understand how things that fall in black holes also get out”. Yes, it’s like a sink for your ignorance, because these guys don’t know how gravity works, or why black holes are black. So they don’t understand the problems with Hawking radiation, or the information paradox, or firewalls. They don’t understand that there are gamma ray bursters, and that matter falls faster and faster because of the reducing speed of light. But what does Wolchover’s article say? Quantum error correction stops firewalls from forming, and instead leads to a two-mouthed black hole called a wormhole! Doubtless they’re holographic too.

Grabbing the limelight

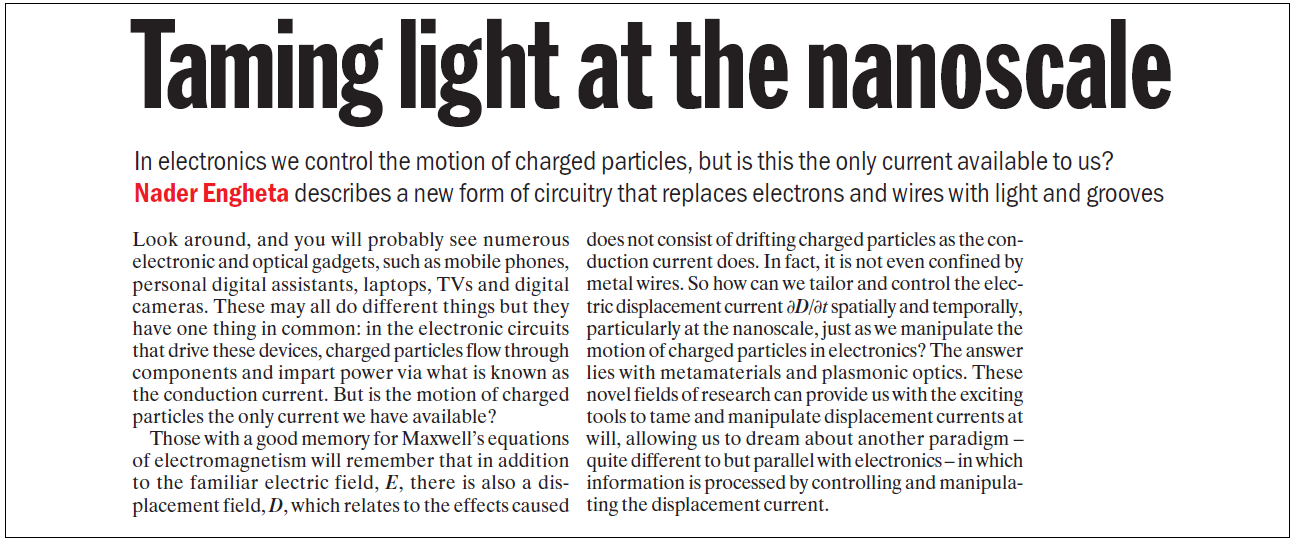

It’s all way too speculative. That’s not good. Especially since quantum computing is grabbing the limelight and leaving other things in the shade. Take a look at the 2010 physicsworld article taming light at the nanoscale. That’s where Nader Engheta talks about optical computing:

Fair use excerpt from taming light at the nanoscale


Optical computing is great science because displacement current is more fundamental than conduction current. Light is displacement current. In gamma-gamma pair production we convert light into an electron and a positron. Then when you move the electron, that’s conduction current. It’s much better to cut out the middleman and use the original light, which moves at c all on its own. Unfortunately when you look on the arXiv, you can see that Nader Engheta isn’t working on optical computing any more. I’m not happy about that.

The case against quantum computing

I’m not the only one who isn’t happy. See The Case Against Quantum Computing by Mikhail Dyakonov. He says things like this: “a useful quantum computer needs to process a set of continuous parameters that is larger than the number of subatomic particles in the observable universe”. Along with “I’m skeptical that these efforts will ever result in a practical quantum computer”. Also see The U.S. National Academies Reports on the Prospects for Quantum Computing by David Schneider. He says things like this: “as I read through various parts of the report, I repeatedly found justification for Dyakonov’s skepticism about the prospects for quantum computing”. Along with “This stands in contrast to the rosy picture of the field you’ll find in much of the popular press”. Also see the review of Scott Aaronson’s book by Guy Wilson. He says things like this: “the book is simply a propaganda tool for the author’s fantasy”. Along with “he is dismissive, or even derisive of these arguments, for a very simple reason: His bread is deliciously buttered by the lucrative investments in the idea of quantum computers”. Last but not least, see Quantum computing as a field is obvious bullshit by Scott Locklin. He says things like this: “In 2010, I laid out an argument against quantum computing as a field based on the fact that no observable progress has taken place. That argument still stands. No observable progress has taken place”. Along with “Hundreds of quantum computing charlatans achieved tenure in that period of time”. Along with “How many millions have been flushed down the toilet by these turds?” Along with “I do feel sad for the number of young people taken in by this quackery”. I’m afraid to say I think he’s right.

There may be trouble ahead

I say that because I know about computer science, and about physics. I know enough to know that quantum computing isn’t science, it’s pseudoscience. It isn’t founded on physics, it’s founded on fantasy. It’s hype and hot air. It’s pie in the sky and jam tomorrow. It’s a tottering tower of unsupported conjecture atop a layer of specious speculation riding a raft of foundation-free hypothesis. Quantum computing isn’t just a waste of time and money. It’s like counterfeit money, the bad money which chases out the good. It’s been chasing out optical computing, and now it’s chasing out physics. Because now “quantum technology” is getting more and more funding. Even though it’s just Emperor’s New Clothes nonsense from a bunch of charlatans. Even though it’s just smoke-and-mirrors claptrap from a cabal of quantum quacks. Quantum quacks who have censored their critics with venom and bile. Quantum quacks who, with the help of their symbiotic hacks, have peddled enough snake oil and moonshine to persuade gullible politicians to give them the money. There may be trouble ahead.

Note 26/10/2019: there’s been stuff in the press this week about Google saying they’ve achieved quantum supremacy. I recommend you Google on quantum supremacy hype. I also recommend you read the Nature article and pay attention to this: “The team challenged its computer, known as Sycamore, to describe the likelihood of different outcomes from a quantum version of a random-number generator. They do this by running a circuit that passes 53 qubits through a series of random operations. This generates a 53-digit string of 1s and 0s — with a total of 2^53 possible combinations (only 53 qubits were used because one of Sycamore’s 54 was broken). The process is so complex that the outcome is impossible to calculate from first principles, and is therefore effectively random. But owing to interference between qubits, some strings of numbers are more likely to occur than others. This is similar to rolling a loaded die — it still produces a random number, even though some outcomes are more likely than others”. That’s no calculation. That’s rolling 53 loaded dice, then saying a real computer can’t calculate how they’ll turn up. When Google realise they’ve been conned, there will be hell to pay.
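For a sense of scale, the number of distinct 53-digit strings of 1s and 0s is two to the power 53. The sketch below works that out, and also rolls an ordinary classical loaded die to illustrate the analogy in the Nature quote. The die weights, the seed and the sample size are arbitrary choices of mine, and this is in no way a model of what Sycamore does.

```python
import random
from collections import Counter

N_QUBITS = 53
n_strings = 2 ** N_QUBITS  # number of distinct 53-digit strings of 1s and 0s
print(f"{n_strings:,}")    # 9,007,199,254,740,992

# A classical loaded die: face 6 is five times as likely as each other face.
FACES = [1, 2, 3, 4, 5, 6]
WEIGHTS = [1, 1, 1, 1, 1, 5]  # arbitrary illustrative weights

rng = random.Random(2019)     # fixed seed so the run is repeatable
rolls = rng.choices(FACES, weights=WEIGHTS, k=10_000)
counts = Counter(rolls)
print(counts.most_common())   # still random, but face 6 turns up most often
```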

hi JD this is well researched and persuasive. Dyakonov is cited by few, found/ cited him years ago and think he has a hilarious yet undismissable style. dont agree with everything youre saying, feel QC is a nascent field that will evolve, much like chip fabrication century ago, but there are definitely some unreasonable expectations going on. this concept repeats a lot in our tech age and seems to be highly applicable to the field of QC. https://en.wikipedia.org/wiki/Hype_cycle

Thanks vzn. But give it time, and I’m confident you’ll think the way I do eventually. Especially if you get to the point wherein you appreciate that there is no “quantum magic”. When it comes to electrons, all we’re dealing with is electromagnetic waves in a closed-path spin ½ configuration. This isn’t just some hype cycle. Quantum computing isn’t like fusion. The fundamental physics just isn’t there.

so you believe in fusion viability but not QC? another way to view QC is that maybe its just the new generation of chip miniaturization. on some level its spectacular theory + experimental finesse that quantum spins can be harnessed for computation. this is now proven out and Dwave led the way with lots of near-unsustainable/realizable hype mixed in (surprise! we both lived thru dotcom era, and thats what startups do!). the scalability is the million or billion dollar question. scalability (tightly coupled with error correction issue) is now admitted to be a very difficult problem even by the proponents. eg even Preskill / NISQ concept. https://arxiv.org/abs/1801.00862

Yes, because fusion occurs in the Sun, and in H-bombs. It even occurs in the “star in a jar”. Google on Doug Coulter “star in a jar”. We don’t have similar precedents for quantum computing. Scalability isn’t the issue. Getting a quantum computer to work is the issue. It’s never going to happen, because quantum spins are just spins. There is no magic, and no frontier. Preskill said “The core of the problem stems from a fundamental feature of the quantum world — that we cannot observe a quantum system without producing an uncontrollable disturbance in the system”. But it isn’t true. Look at weak measurement. See https://physicsworld.com/a/in-praise-of-weakness/ and https://physicsworld.com/a/catching-sight-of-the-elusive-wavefunction/ featuring Aephraim Steinberg and Jeff Lundeen.


PS: I hope you don’t get into bother for this.

actually have long felt/ argued something like you do that weak measurement is fairly strong evidence for a realist theory of QM, and new research is increasingly going in that direction. (many refs to this in my blogs on QM.)

re your blog ref, rob the mod showed up immediately to warn about posting your blog to remind everyone of your persona non grata/ blacklisted status, but maybe a precedent has been set now that nobody is going to overly freak out with future refs either. you still have some secret admirers on the site. SE moderation can be heavyhanded at times and have skated on thin ice wrt that many times.

I’m not sure research is going in that direction, vzn. I was looking at the research section of Aephraim Steinberg’s website earlier, and it looks like he’s getting sucked into “quantum information”. Yes, rob showed up immediately because the moderators aren’t really moderators, they’re thought police. They turned a blind eye to abuse in chat and suspended me for a year for answering back, then encouraged mob downvotes and suspended me for a year for “low quality answers”. Then whilst I was suspended, they extended the chat suspension to 3,000 years. Now I’m allegedly a “name-caller”. That’s what happens if you fight your corner and challenge the lies-to-children. Not good.

cyberspace could be the ultimate in free speech but human foibles enter the picture. can relate, recently got banned from reddit machine learning and physics for posting related comments and links to my blogs on …. machine learning and physics. a bummer because theres no forum like it and often could get substantial spikes in traffic just from those comments. it seems like maybe the bigger forums are more hair-trigger in their moderation and “brook no protest”. much like SE mods. sometimes its a fine line and yes feel it sometimes borders on thought policing/ control. on other hand there have been very many flags over the years in the room and they became a little paranoid, there is precedent for shutting entire chat rooms down (scifi). the SE “no nonmainstream physics” policy honestly sometimes seems incompatible with cutting edge research. years ago thought that maybe sharp ppl/ experts on SE would be interested in new advanced theories, but in many ways they are married to their textbooks. reminds me there is an old zen saying “in the beginners mind there are many possibilities, in the experts only a few”. think some of the challenge is building up alternative cliques/ groups/ communities. this is proven very difficult eg physicsoverflow case. have you heard of it?

I’ve heard of this:


Moat: It is hard to fill a cup that is already full.

Jake Sully: My cup is empty. Trust me..


All that “no non-mainstream physics” stuff is incompatible with science. If it was compatible with science, there would never ever have been any scientific progress. Science would still be telling you the Sun goes round the Earth. I even get accused of being non-mainstream when I give a dozen references to Einstein and the evidence, plus Irwin Shapiro, Ned Wright, and so on. What they really mean is “anything that challenges what I say will be dismissed as non-mainstream, because I’m the self-appointed “expert”, and I will not have anybody saying I’m wrong”. The bigger sites are more likely to have a self-appointed “expert” taking this attitude, whilst peddling lies-to-children and pseudoscience. The situation is pretty grim. But not totally. Yes, I’d say pressure is building. The thought-police can stifle free speech in science on their own sites, but not everywhere.

Love your articles, keep up the good work, I look forward to the next! You’re investigating a lot of the things I am and giving me more to check out so thank you. It has always amazed me that wave-particle duality is held up as the shiny cornerstone experimental example or “proof” for quantum mechanics and pair production is staring us in the face screaming hey look you can make “matter” out of light and nothing else!!

Why thank you, uncertainH. I am honoured. The next article is on the double-slit experiment. I finished it last night, and will polish it this evening. No many-worlds multiverse is required!

The other fiascos require a Physics Detective as well,

National Ignition Facility (DT Inertial Confinement Fusion Ignition)

Room Temp Superconductor

Density Functional Theory

I don’t think of fusion as a fiasco, Hermit. That’s because we can actually do fusion on a benchtop. Google on “star in a jar”. In similar vein we have low-temperature superconductors, so I don’t bear any ill will towards room temperature superconductors. As for density functional theory, I’m afraid I don’t know anything about it.

Laughlin and Winterberg said 10 years ago that DT ICF Ignition would be a sure-thing by now. Has not happened.

Perhaps Dr. Winterberg can leave a brief comment as to why not.

I emailed him.

Please tell me where and in which context I said this. F. Winterberg

The Release of Thermonuclear Energy by Inertial Confinement, © 2010 World Scientific

12.1 What Kind of Burn. P.373

“While it is difficult to predict the future, some general observations can be made. The achievement of DT ignition and burn is assured in the near future, ….”

Duff, on a lighter note: if the brain in the jar belongs to Mr. Spock, then I will proceed to drink the koolaid; if it belongs to Dr. Sheldon Cooper then please throw me a life vest…….. or a new thinline Mac.

There ain’t no brains in any jars Greg. And no koolaid either. Just a pile of stuff about old experiments and papers and the conclusions you can draw from them. As for The Big Bang Theory, that’s a physics free zone. This isn’t.

The interesting thing about quantum computing is that the QC theorists (with VERY rare exceptions) are classical “computer scientists” with no previous knowledge or experience in quantum physics. Their vision of quantum mechanics is reduced to one notion: “entanglement”. The conventional attributes of QM, like the Hamiltonian, energy, wave function, and Schroedinger equation, are not in their vocabulary, which is reduced to “qubits, gates, entanglement, and errors”. Thus, my trivial statement that the general state of a system of N qubits is described by 2^N continuous parameters (the quantum amplitudes) came as a big surprise to them, and caused indignant reaction. What about checking the relevant textbooks?
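As a rough illustration of the 2^N statement above, here is a minimal Python sketch that counts the complex amplitudes of a general N-qubit state and estimates the memory a classical machine would need to store them. The function names and the figure of 16 bytes per amplitude (a double-precision complex number) are assumptions made for this example, not something from the comment.

```python
def amplitude_count(n_qubits: int) -> int:
    """Number of complex amplitudes in a general pure state of n qubits (2^N)."""
    if n_qubits < 0:
        raise ValueError("n_qubits must be non-negative")
    return 2 ** n_qubits


def state_vector_bytes(n_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Bytes needed to store every amplitude.

    Assumption: each amplitude is a double-precision complex number (16 bytes).
    """
    return amplitude_count(n_qubits) * bytes_per_amplitude


if __name__ == "__main__":
    # The count doubles with every added qubit.
    for n in (1, 10, 20, 50):
        print(n, amplitude_count(n), state_vector_bytes(n))
```

The point of the sketch is only the exponential growth in the number of continuous parameters; it says nothing about how precisely those parameters can be controlled in hardware.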

Hi Mikhail. Can you give me some links to those indignant reactions? As for QC theorists, I see names like John Preskill who “made a name for himself by publishing a paper on the cosmological production of superheavy magnetic monopoles in Grand Unified Theories”. Only I know that there are no magnetic monopoles, just as there are no one-sided coins. As for the Natalie Wolchover article, words fail me.

Great stuff. We were very surprised when our lab made a big fuss about Q. I was asked to think about applications. I could only think that it might make a good random number generator, but until I read these blogs I was under the illusion that there was something fundamentally random going on there. Now it seems that we are just working with a complex dynamical system, so perhaps it’s not even going to be an exceptional tool for that. Perhaps yes there will be a use for it as an analog “computer” but I haven’t heard anything promising.

Thanks Alan. I think the thing is this: when you know about computers and about photons and electrons, you know hype and hot air when you see it. For now, politicians and funding bodies do believe the hype and hot air, so quantum technology is all the rage. But I don’t think it will last long myself. There’s more and more people voicing their skepticism, nobody is delivering anything useful, and the “science” isn’t based on any understanding of the fundamental physics. I think it will all be swept away when somebody delivers something that is based on an understanding of the fundamental physics.

Just read Joy Christian’s paper you cited. It is very readable and clear. No mumbo-jumbo, just the math. As you say, the Clifford Algebra makes a lot of sense when dealing with rotational forces like spin. He seems to have a good head of steam: http://einstein-physics.org/.

I’m not sure Alan. I think Joy was hit hard by the attacks. His institute isn’t anything like the Bohr Institute. Or the Perimeter Institute where he had a connection. I should meet up with him I suppose. Oxford is an easy drive from Poole. We’ve exchanged a fair few emails.

25 years of Shor’s algorithm.

Shor’s factoring algorithm (1994) was an outstanding mathematical result that started the whole field of quantum computing. The full algorithm can factor a k-bit number using 72k^3 elementary quantum gates. Thus factoring 15 requires 4608 gates operating on 21 qubits. This is far beyond current and (arguably) future experimental possibilities. D. Beckman et al, Phys. Rev. A 54, 1034 (1996) introduced a “compiling” technique which exploits properties of the number to be factored, allowing exploration of Shor’s algorithm with a vastly reduced number of resources. One might say that this is a sort of (innocent) cheating: knowing in advance that 15=3×5, we can take some shortcuts, which would not be possible if the result were not known beforehand. Using this trick, factoring of 15 and 21 was actually experimentally demonstrated, however no further progress was reported.

Thus it is premature to worry about quantum computing breaking cryptographic codes.
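The arithmetic behind the figures quoted above can be checked with a few lines of Python. This is only a sketch: the gate formula 72k^3 is taken from the comment, while the qubit formula 5k+1 is an assumption chosen because it reproduces the quoted 21 qubits for the 4-bit number 15. The function names are hypothetical.

```python
def shor_gate_count(k_bits: int) -> int:
    """Elementary gates for a k-bit number, using the 72*k^3 figure quoted above."""
    return 72 * k_bits ** 3


def shor_qubit_count(k_bits: int) -> int:
    """Qubits for a k-bit number.

    Assumption: 5k + 1, which matches the 21 qubits quoted for k = 4.
    """
    return 5 * k_bits + 1


if __name__ == "__main__":
    for number in (15, 21):
        k = number.bit_length()  # number of bits needed to write the number
        print(number, k, shor_gate_count(k), shor_qubit_count(k))
    # A 2048-bit modulus, the size commonly used for RSA keys.
    print(2048, shor_gate_count(2048), shor_qubit_count(2048))
```

For 15 (4 bits) this gives 72 × 64 = 4608 gates and 21 qubits, in line with the comment. The 2048-bit line simply scales the same formulas and ignores any overhead for error correction.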

Recent IBM press release: The design of IBM Q System One includes a nine-foot-tall, nine-foot-wide case of half-inch thick borosilicate glass forming a sealed, airtight enclosure that opens effortlessly using “roto-translation,” a motor-driven rotation around two displaced axes engineered to simplify the system’s maintenance and upgrade process while minimizing downtime – another innovative trait that makes the IBM Q System One suited to reliable commercial use.

This machine has 20 qubits, thus it still will not be able to factor 15 by Shor. Instead: “Future applications of quantum computing may include finding new ways to model financial data and isolating key global risk factors to make better investments.”

Apparently, isolating global risks is much, much easier than factoring 15 …

LOL, Mikhail. The hype rumbles on. And on. And on. But people who’ve been promised jam tomorrow aren’t stupid. When it doesn’t come, their patience finally snaps. I think of it as something like the cables of a suspension bridge. They’re made of individual wires. We use acoustic monitoring to listen to the wires snapping. When it comes to quantum computing, I’m hearing a ping ping ping!

I would enjoy witnessing the crash within my lifetime…

I consider it a ping ping that IEEE Spectrum magazine, with its 400 000 subscribers, commissioned from me the article “The case against QC” (the title was theirs). See also their postscript: https://spectrum.ieee.org/tech-talk/computing/hardware/the-us-national-academies-reports-on-the-prospects-for-quantum-computing

Yep, good stuff Michel. Ping. Ping. Ping. One day the whole edifice will come crashing down.

Hi, John!

If I had a couple of billion to spare, and somebody proposed that I invest in quantum computing, I would say: OK, I would be happy to do this as soon as somebody shows me a working quantum device, however simple it may be. Let it be a primitive quantum calculator with the capacities of a 7-year-old child. Its size might be huge, I wouldn’t even mind if it works only when plunged in liquid helium. Just show me something quantum, but working!

But, if after 20 years of hype, billions of spent dollars, and daily promises to revolutionize our world, you still have absolutely NOTHING to show (except theory), then I would prefer to wait…

I think you’d be wise to wait, Michel. Unfortunately Google weren’t wise. It will be fun to see the egg on their faces when they finally throw in the towel. Did you hear the latest hype about Google reaching quantum supremacy? What a cheap stunt.

John, OK I will follow your advice. Quantum supremacy – as we, the French say: merde alors! Have you noticed that the word “quantum” is now widely used to define something really good, unusual, awesome, exceptional, etc, like Quantum winter suite, Quantum women’s sailor, Quantum footbag shoes… Planck and Schroedinger would be quite surprised.

You missed one out, Michel. You forgot about the Quantum Horseshit.

!!!

John and Michel,

You don’t have to wait a lifetime, perhaps a few months

I am far more computer scientist than theoretical physicist. I never got to Jackson level E&M 🙂

However, I know how to tinker and push and bring the enterprise down. This is cold fusion 2.0, except that something like “cold fusion” is real (and was “real” (reproducible, no) even then, just an affront to the enterprise).

If you or anyone is game – it can be done. I will even promise a fair reward from the consulting work we could do.

https://docs.google.com/document/d/1ds14CBJzzUiYCzdGMwB6MBiopcg69_rhYjAd0LcSXsY/edit#

Hi Navid. I want to make a difference, but I’m cagey of getting mixed up with the hydrino stuff. Besides, I’ve already said plenty about electron spin. See https://physicsdetective.com/the-electron/. The electron goes round in circles in a uniform magnetic field because spin is real.

Until spin can be calculated and visualized by every engineer – then electron spin will remain a weird quantum thing and not realizable by a practical physics based model. Your concept is correct but you can’t force change with concepts you need actual models.

Forget hydrinos – doesn’t matter – show how electron spin works in an exact model that explains all of the properties of the experiment – then the physics world will run with that. EVEN IF THE MODEL IS NOT EXACTLY CORRECT – if it explains the experiment perfectly and with actual things like current, angular momentum etc

This would be an intellectual trim-tab if you will.

Navid: I stuck something in your scrapbook. Feel free to rearrange it.

Thanks John. I wish I had time to focus on the physics – I’m more of a connector at this moment. We’re really focused on an energy transition at scale. The European Commission is now funding hydrino energy at up to $96mi this year https://twitter.com/EndOfPetrol/status/1192493195105583108 – I’m not sure how bureaucrats figured this out. The EIB’s decision to end fossil fuel lending in 2021 may be related.

Sorry Navid, but I don’t want to get mixed up with Randell Mills and his hydrino power. No more, please.

You have put me in a tough position because I am using your site to talk about foundations of physics, and this is with academic physicists. I am not starting them on theoretical grounds about QM etc, but showing them data (endofpetroleum.com/proof) and then suggesting they read articles here. So this little note will serve as a guide. You are making a choice to stay in the well of knowledge you have, that’s fine – as long as everyone is clear on that.

Hi, Navid. I am a kind of specialist in spin physics (in atoms and condensed matter). I do not know WHAT spin is, i.e. how to derive it from some more general not yet existing theory. I take it for granted that the electron, proton, and neutron have spins. One could pose a lot of similar questions: what is mass, charge etc, what is the origin of the strong interaction?

I think that only some additional experimental facts can help. Meanwhile the consequences of the existence of charge, mass, and spin can be “calculated and visualized by every engineer”. It is a quantum thing, because the internal angular momentum of an electron is ħ/2 (ħ is the reduced Planck constant).

We move forward very slowly, by studying and trying to understand experimental facts, and this is the only way we have.

Michael,

I think you are unaware of the theoretical physicist Mills. Let me tell you something very straight – I’m going to assume you are older and have been beaten down to assume there won’t be a solution in your lifetime. As we get older we get conservative. Thus the statement, “science advances one funeral at a time.” (Max Planck)

It is not the time to be Yoda (old wise man with caution) it is the time to be Luke Skywalker (aggressive move fast). What you stated about intrinsic angular momentum is the old way of looking at it. We have a general model now. We should have tens of physicists working on it, poking holes in it. Instead we have TOTAL SILENCE.

You need to look at Mills’s work. Brilliantlightpower.com/theory

Due to the urgency of the matter – I am going to ask you to look at experiments – which definitively with zero doubt – prove quantum mechanics is wrong. If you find a flaw, please reach out to me immediately. Knowing what I know and having talked to perhaps 30 physicists, that is not going to happen. EXPERIMENTS OVERTURN THEORY.

endofpetroleum.com/proof

I am looking for a few good men or women physicists. I can incentivize action, if the benefit of being involved in something generational is not enough.

Michael, you really need to read these articles:

.

https://physicsdetective.com/how-pair-production-works/

https://physicsdetective.com/the-electron/

https://physicsdetective.com/what-charge-is/

https://physicsdetective.com/the-screw-nature-of-electromagnetism/

https://physicsdetective.com/how-a-magnet-works/

https://physicsdetective.com/the-mystery-of-mass-is-a-myth/

https://physicsdetective.com/the-nuclear-force/

.

Spin is real. It’s a real rotation. An electron goes round in circles in a uniform magnetic field due to Larmor precession.

OK, I will read them

See my latest article for something briefer: https://physicsdetective.com/the-theory-of-everything/

Google’s “Quantum supremacy” reproduced by classical computer

November 23, 2020

Classical computers can efficiently simulate the behavior of quantum computers if the quantum computer is imperfect enough.

Viewpoint on:

Yiqing Zhou, E. Miles Stoudenmire, and Xavier Waintal

Phys. Rev. X 10, 041038 (2020)

What Limits the Simulation of Quantum Computers?

Meaning that Google’s “Quantum supremacy” has been debunked

Good stuff Michael. More people need to know about all these quacks sucking up public money and doing nothing useful. I include Google in that, because whilst their motto was allegedly do no evil, what it really was, was pay no tax.

And now that my candidate Sleepy Joe is Da Prez-Elect, the chances are much better the Corporate Welfare Chain will be whittled down to size. Especially if we win both of the Georgia Senate seats.

You are right to warn that quantum computing is a hoax. Any practical device is really an analog computer. I have published patents about how analog error correction coding can be 10000 times faster than digital. It’s just regular electronics, nothing mystical.

But your skepticism about General Relativity is misplaced. I have worked through that theory in detail and it is a mathematical inevitability. I suggest Barry Spain’s book “Tensor Calculus” is a good start and very readable. Moreover there is a huge amount of experimental verification that it is the correct explanation of “gravity”.

All points noted Paul. I know what you mean about an analogue computer. I saw one once that worked via flowing water.

.

But I’m not skeptical about General Relativity. I’m forever referring to Einstein about that. However I’m skeptical of what some call “modern” General Relativity, because it flatly contradicts Einstein’s General Relativity in some absolutely crucial ways, leading to things like the elephant and the event horizon, and the parallel antiverse. This is probably the most important thing: https://einsteinpapers.press.princeton.edu/vol7-trans/156?highlightText=%22spatially%20variable%22 . See what you think of https://physicsdetective.com/how-gravity-works/

Woit’s blog is worth a read:

.

https://www.math.columbia.edu/~woit/wordpress/?p=13256

.

There’s been some appalling fake physics publicized by Quanta Magazine, Google, and Nature. It’s all about simulating a wormhole on a quantum computer. It is bullshit squared. Words fail me.

I watched a t.v. show a few years ago on a thousands-of-years-old Chinese water wheel/sluice gate that ran automatically on an analog program of nothing but different lengths of knotted ropes and wood cutout guides. Simply fascinating 👌.

Sounds interesting Greg. I tried to find it on the internet but could not. Chinese sluice came up with hits for Chinese sauce. And for Chinese restaurants near Seaton Sluice. Funnily enough I used to live less than two miles from Seaton Sluice.

The part of Shor’s algorithm that finds the period of a function seems like bullshit. Finding the period of a counter that counts to 3 is very different from counting to 10^40, for chrissakes.
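For readers unsure what “finding the period” refers to, here is a minimal classical sketch in Python. It finds the period r of a^x mod N by brute force and then turns that period into factors using the standard greatest-common-divisor step. The function names are hypothetical, and this is not a quantum algorithm: the loop can take up to r steps, which is exactly the part that does not scale to numbers like 10^40 and that the quantum step is supposed to replace.

```python
from math import gcd


def multiplicative_order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1, found by brute force."""
    if n < 2 or gcd(a, n) != 1:
        raise ValueError("need n >= 2 and gcd(a, n) == 1")
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factors_from_period(a: int, n: int):
    """Try to split n using the period of a modulo n.

    Returns a pair of factors, or None if this choice of a is unlucky.
    """
    r = multiplicative_order(a, n)
    if r % 2 == 1:
        return None  # odd period: pick another a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None  # trivial square root of 1: pick another a
    return gcd(half - 1, n), gcd(half + 1, n)


if __name__ == "__main__":
    print(multiplicative_order(7, 15))
    print(factors_from_period(7, 15))
```

With a = 7 and N = 15 the powers run 7, 4, 13, 1, so the period is 4 and the factors come out as 3 and 5. For a 40-digit N the same loop would be hopeless on any classical machine, which is the scaling question raised above.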

The whole thing is just one big tottering tower of bullshit, Hqm. Have you seen the latest, about the quantum wormhole? Woit was talking about it: https://www.math.columbia.edu/~woit/wordpress/?p=13256. FFS, garbage like that fair takes the breath away.

It looks like the Willow chip arrives just in the nick of time to update these hard working Hoosiers……..

https://www.tomshardware.com/desktops/indiana-bakery-still-using-commodore-64s-originally-released-in-1982-as-point-of-sale-terminals

Greg,

Those 40 year old computers, like the super-computers today, can present truth or lies. But the 40 year old ones can’t hide the truth like the modern ones can. Especially when used by the builders of “The whole thing is just one big tottering tower of bullshit” that Sir Detective mentioned above. I mean those who obfuscate physics (In Search Of Ocean Heat – 20).

The sleazy, used-car lot sales pitches still abound:

https://www.barchart.com/story/news/31987200/the-most-exciting-human-discovery-since-fire-world-economic-forum-touts-this-new-tech-as-sure-to-change-the-world?utm_source=rss&utm_medium=feed&utm_campaign=nordot

More Garbage In, Garbage Out in the Quantum Quack IT world…..

https://interestingengineering.com/science/us-quantum-computing-missing-particle

Thanks Greg: “In this approach, researchers work to secure quantum information by encoding it into the geometric properties of exotic particles called anyons”. FFS, this isn’t just quantum bullshit. This is quantum bullshit squared.