Everybody loves a mystery, and one of the best mysteries in physics is dark matter. As to where it starts, check out Brian Koberlein who said its origins can be traced to the 1600s. Or Alexis Bouvard who in 1821 said anomalies in the orbit of Uranus could be caused by an unseen body, in effect dark matter. Or see the Ars Technica history of dark matter article by Stephanie Bucklin. She said in 1884 Lord Kelvin concluded that “many of our supposed thousand million stars, perhaps a great majority of them, may be dark bodies”. Or see the 2013 dark matter review paper by Jaan Einasto. He tells us about work by Ernst Öpik in 1915, by Jacobus Kapteyn and James Jeans in 1922, and by Jan Oort in 1932. Oort wrote a paper on what’s now known as the Oort discrepancy, saying the gravity of the visible stars in the galaxy wasn’t enough to explain their up and down motion. They go round the galaxy like merry-go-round horses. Of course, all the dark matter articles refer to Fritz Zwicky. He wrote a paper in 1933 on the redshift of extragalactic nebulae. He said the galaxies in the Coma cluster appeared to have a velocity dispersion of 1000 km/s. And that “if this should prove true, the surprising result would emerge that dark matter is present in very much greater density than luminous matter”. See Discover magazine for more. Like Kelvin, Zwicky was ahead of his time. So much so that it was forty years before he was taken seriously. That’s when astronomers learned about flat galactic rotation curves.

Flat galactic rotation curves

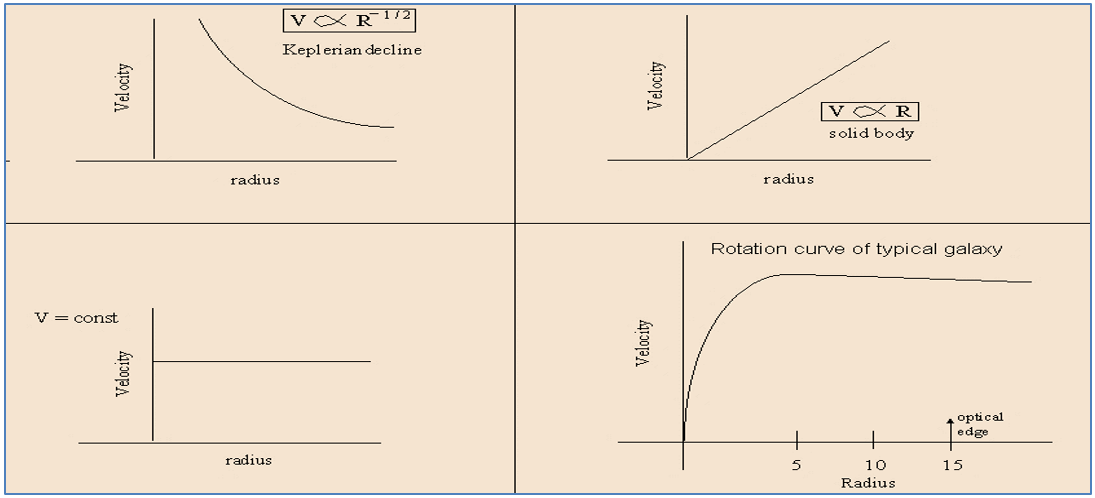

Martha Haynes of Cornell explains flat galactic rotation curves succinctly in her astro 2201 course. In a system where most of the mass is concentrated at the centre, the outer bodies orbit slower than the inner bodies. The solar system is such a system, hence its rotation curve exhibits a “Keplerian decline” in line with Kepler’s laws. Hence the average orbital speed of the Earth is 29.78 km/s as compared to 5.43 km/s for Neptune. If however the solar system were a solid body, the orbital speed would increase linearly with distance, so the rotation curve would be a straight slope. Alternatively if the mass enclosed within a given radius increased linearly with that radius, the orbital speed would be constant regardless of distance, so the rotation curve would be a flat line. A typical spiral galaxy exhibits a mixture of linear and flat with a curve in between:

Images by Martha Haynes of Cornell, see her astro 2201 course

As Haynes says: “most galaxies have rotation curves that show solid body rotation in the very center, following by a slowly rising or constant velocity rotation in the outer parts”. Whilst the typical galactic rotation curve isn’t absolutely flat, people call it a flat galactic rotation curve because it’s fairly flat when you get away from the centre of the galaxy. It’s nothing like the Keplerian decline of the solar system curve, and it doesn’t match the mass distribution calculated from the visible stars. See the Wikipedia galaxy rotation curve article for the key point: “the mass estimations for galaxies based on the light they emit are far too low to explain the velocity observations”. Something doesn’t add up.
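
If you want to check the numbers for yourself, here is a minimal Python sketch of the three idealised cases described above: Keplerian decline, solid body rotation, and a flat curve. The function names are my own, and the value of 30.07 AU for Neptune's orbital radius is an assumption that isn't given in the text. It treats orbits as circular, so it's an illustration rather than a proper model.

```python
import math

GM_SUN = 1.32712440018e20  # m^3/s^2, gravitational parameter of the Sun
AU = 1.495978707e11        # m, one astronomical unit


def keplerian_speed(gm, r):
    """Circular orbital speed around a central point mass: v = sqrt(GM / r)."""
    return math.sqrt(gm / r)


def solid_body_speed(omega, r):
    """Rigid rotation at angular speed omega: v grows linearly with r."""
    return omega * r


def flat_curve_speed(g_times_mass_per_metre):
    """If enclosed mass is M(r) = k * r, then v = sqrt(G * k) at every radius."""
    return math.sqrt(g_times_mass_per_metre)


# Keplerian decline in the solar system (Neptune's 30.07 AU is an assumed input).
earth = keplerian_speed(GM_SUN, 1.0 * AU) / 1e3
neptune = keplerian_speed(GM_SUN, 30.07 * AU) / 1e3
print(f"Earth   {earth:.2f} km/s")
print(f"Neptune {neptune:.2f} km/s")
```

The Keplerian case falls off as one over the square root of the distance, which is why Neptune ends up so much slower than the Earth. The flat case has no distance dependence at all, which is the whole point.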

Something doesn’t add up

The typical galactic rotation curve isn’t what was expected. If the only matter present was the matter we can see, the outer stars should be moving slower than the inner stars. However they’re moving as fast, or even faster. The discrepancy is significant. It “can be accounted for by assuming a dark matter halo surrounding the galaxy”.

Public domain M33 rotation curve image by Stefania deluca, see Wikipedia

See the Wikipedia dark matter article for some more history. It refers to Horace Babcock’s 1939 paper on the rotation curve of the Andromeda galaxy, and to work by Vera Rubin and Kent Ford starting in the 1960s: “Rubin found that most galaxies must contain about six times as much dark as visible mass”. See Rubin and Ford’s 1970 paper the rotation of the Andromeda Nebula from a spectroscopic survey of emission regions. You can also read how in 1972 David Rogstad and Seth Shostak published a paper on the rotation curves of five spiral galaxies. And how in 1975 Morton Roberts and Robert Whitehurst wrote a paper describing their use of the 21cm hydrogen line to “trace the rotational velocity of Andromeda to 30 kpc”. Hence by around 1980 “the apparent need for dark matter was widely recognized as a major unsolved problem in astronomy”.
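
To see why a flat curve implies so much unseen mass, here is a rough Python sketch that turns a rotation speed into the mass that must lie inside the orbit, using M = v²r/G for a circular orbit. The 230 km/s speed is an assumed illustrative figure, not a measurement quoted above, and the spherical-symmetry shortcut is an assumption too.

```python
G = 6.674e-11     # m^3 kg^-1 s^-2, Newton's gravitational constant
KPC = 3.0857e19   # m, one kiloparsec
M_SUN = 1.989e30  # kg, one solar mass


def enclosed_mass(v, r):
    """Mass inside radius r needed to hold a circular orbit at speed v."""
    return v * v * r / G


v_flat = 230e3  # m/s, assumed illustrative flat rotation speed
for r_kpc in (10, 20, 30):
    m = enclosed_mass(v_flat, r_kpc * KPC) / M_SUN
    print(f"r = {r_kpc:2d} kpc  M(<r) ~ {m:.2e} solar masses")
```

With a constant speed the enclosed mass keeps growing in step with the radius, even out where the starlight has faded away.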

Large scale structure

Something else that’s said to need dark matter is the large scale structure of the universe. See for example dark matter and structure formation in the universe by Joel Primack dating from 1997. Or simulating the joint evolution of quasars, galaxies and their large-scale distribution. That’s by Volker Springel and 16 other authors, and it dates from 2005. It’s all to do with the standard model of cosmology. The idea is that if the early universe consisted only of some uniform hot soup of protons and electrons and photons and neutrinos, then galaxies along with clusters and superclusters could not have formed so soon. Dark matter is said to be the vital ingredient for the formation of such structure. See the Wikipedia structure formation article where you can read this: “dark matter begins to collapse into a complex network of dark matter halos well before ordinary matter, which is impeded by pressure forces. Without dark matter, the epoch of galaxy formation would occur substantially later in the universe than is observed”. This could be construed as inference rather than evidence, particularly since there’s no hard scientific evidence for some aspects of the model, such as singularities or inflation. But even so it’s generally thought to strengthen the case for dark matter.

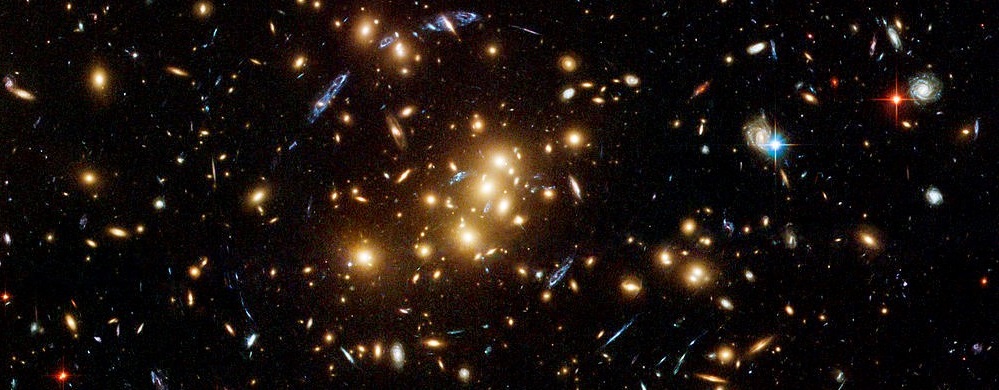

Gravitational lensing

There are other more direct reasons to suspect the existence of dark matter. Such as gravitational lensing. See the 2011 paper on the dark matter of gravitational lensing by Richard Massey, Thomas Kitching, and Johan Richard. They say “the most successful technique with which to investigate it has so far been the effect of gravitational lensing”. They give examples such as B2045+265 where there are four images of a quasar, SDSSJ0946+1006 where there are two Einstein rings, and the CL0024+17 cluster where multiple images of background galaxies are visibly distorted:

Public domain image by NASA/ESA/M J Jee (Johns Hopkins University) see Wikimedia Commons

As per the CFHTLenS article what is gravitational lensing? we can identify multiple images of the same background galaxy via spectroscopy, and we can use the distortion to gauge the mass of the cluster. It appears to greatly exceed the mass of the visible matter. There is a definite discrepancy.
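
As a hedged illustration of how distortion can be used to gauge mass, here is a minimal Python sketch using the textbook point-mass lens formulas: the deflection angle 4GM/(c²b) and the Einstein radius. Real cluster work uses extended mass models, so treat this as a toy. The function names are my own, and the three distances in the usage example are made-up placeholder inputs, not values for any real cluster.

```python
import math

G = 6.674e-11        # m^3 kg^-1 s^-2
C = 2.99792458e8     # m/s
M_SUN = 1.989e30     # kg
R_SUN = 6.957e8      # m
MPC = 3.0857e22      # m, one megaparsec
ARCSEC = math.pi / (180 * 3600)  # radians per arcsecond


def deflection_angle(mass, impact_parameter):
    """Light deflection by a point mass, in radians: 4GM / (c^2 b)."""
    return 4 * G * mass / (C ** 2 * impact_parameter)


def einstein_radius(mass, d_l, d_s, d_ls):
    """Einstein radius in radians for a point-mass lens.

    d_l, d_s, d_ls are the lens, source, and lens-to-source distances.
    """
    return math.sqrt(4 * G * mass * d_ls / (C ** 2 * d_l * d_s))


def mass_from_einstein_radius(theta_e, d_l, d_s, d_ls):
    """Invert the Einstein radius formula to get the lensing mass."""
    return theta_e ** 2 * C ** 2 * d_l * d_s / (4 * G * d_ls)


# Sanity check: starlight grazing the Sun, the famous 1919 measurement.
sun_arcsec = deflection_angle(M_SUN, R_SUN) / ARCSEC
print(f"Deflection at the solar limb: {sun_arcsec:.2f} arcsec")

# Toy cluster: a 30 arcsec ring with placeholder distances (all hypothetical).
d_l, d_s, d_ls = 1000 * MPC, 2000 * MPC, 1400 * MPC
theta = 30 * ARCSEC
cluster_mass = mass_from_einstein_radius(theta, d_l, d_s, d_ls)
print(f"Toy lensing mass: {cluster_mass / M_SUN:.2e} solar masses")
```

The lensing mass scales with the square of the ring's angular size, so a modest error in the angle or the distances makes a big difference to the answer.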

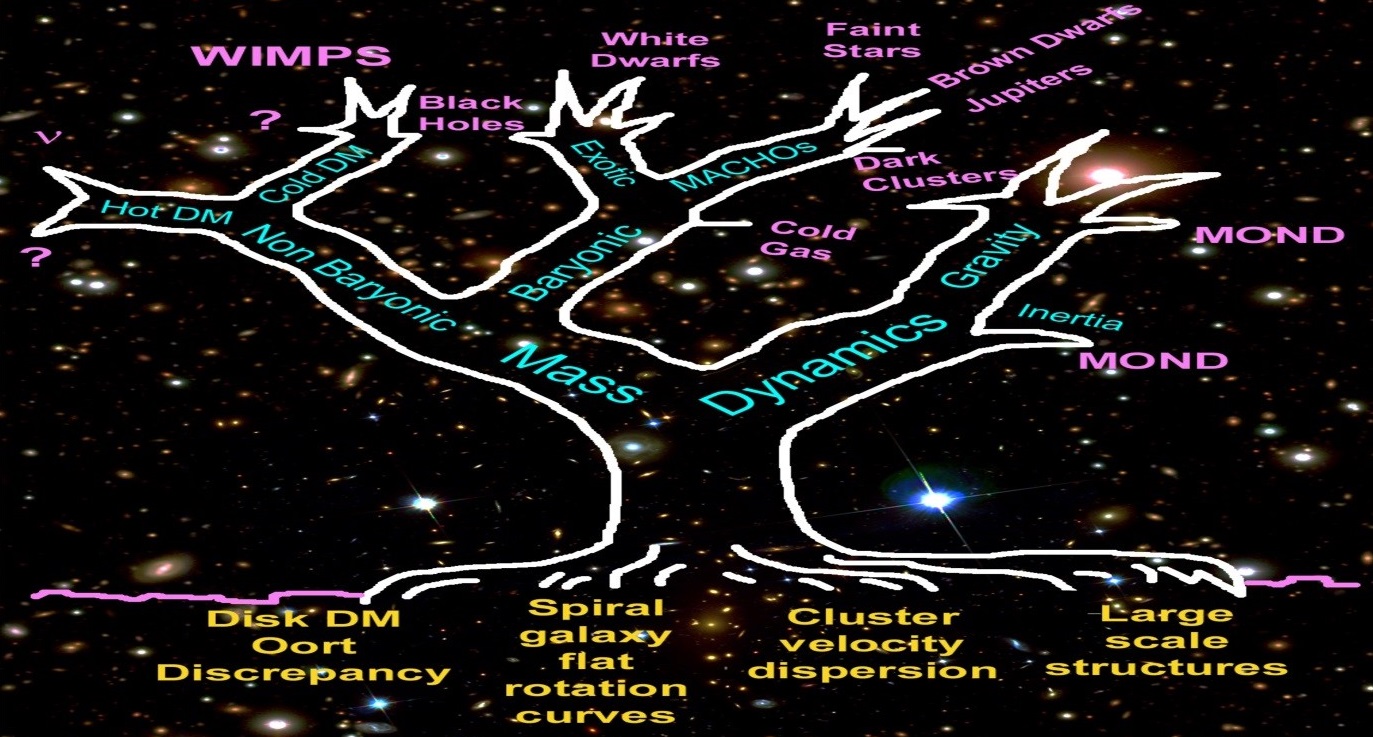

Modified Newtonian Dynamics

It is of course possible that the discrepancy is there for some other reason. It’s possible that dark matter is an incorrect assumption, and that some alternative theory is correct. One such theory is Mordehai Milgrom’s Modified Newtonian Dynamics, also known as MOND. Strictly speaking it’s a “phenomenological equation” rather than a theory per se. In a way it’s a retrofit that uses an extra factor, an acceleration constant $a_0$ with a value of circa $1.2 \times 10^{-10}\ \mathrm{m/s^2}$. See Stacy McGaugh’s MOND pages for some interesting material:

Dark matter tree by Stacy McGaugh © 1995, 2013

Also see the 1984 paper does the missing mass problem signal the breakdown of Newtonian gravity? which Milgrom co-authored with Jacob Bekenstein. Or check out Milgrom’s 2015 paper the road to MOND – a novel perspective. Milgrom says the dynamics are standard when system accelerations are larger than $a_0$, but scale invariant when accelerations are below $a_0$, such that the circular orbital speed becomes asymptotically constant. Whilst MOND matches some observations well, there are said to be some problems. For example it doesn’t work too well for galaxy clusters, it’s allegedly disproven by the Bullet cluster, and it allegedly doesn’t satisfy conservation laws. Milgrom concedes the galaxy clusters point in his Scholarpedia article, but rejects the Bullet cluster claim and says MOND can satisfy all the standard conservation laws. I have some sympathy for Milgrom, but would say the real issue for MOND is that there’s no explanatory cause. And of course that it’s challenging general relativity, which is just about the best-tested theory we’ve got. See Clifford M Will’s paper on the confrontation between general relativity and experiment. On top of that it’s arguably going against the grain of Einstein and the evidence. In the road to MOND Milgrom says MOND has “added appeal in that the measured value of $a_0$ might have strong cosmological connotations”. He gives the expression $2\pi a_0 \approx cH_0 \approx c^2(\Lambda/3)^{1/2}$ where $H_0$ is the Hubble constant and $\Lambda$ is the cosmological constant. The problem with that is that the circular acceleration of an orbiting body is only there because the speed of light is spatially variable. When $a_0$ and $H_0$ are constant but $c$ is not, $2\pi a_0 \approx cH_0$ just doesn’t work for me. Nor does entropic gravity.
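
For anyone who wants to see how close the three quantities in Milgrom's expression actually are, here is a small Python sketch that just does the arithmetic. The values of 70 km/s/Mpc for the Hubble constant and 1.1 × 10⁻⁵² m⁻² for the cosmological constant are my assumed inputs, not figures from the text. The last function uses the standard deep-MOND relation v⁴ = GMa₀ for the asymptotic speed, which isn't spelled out above, so take that as an assumption as well.

```python
import math

A0 = 1.2e-10          # m/s^2, MOND acceleration constant from the text
C = 2.99792458e8      # m/s
G = 6.674e-11         # m^3 kg^-1 s^-2
M_SUN = 1.989e30      # kg
MPC = 3.0857e22       # m

H0 = 70e3 / MPC       # 1/s, assumed Hubble constant of 70 km/s/Mpc
LAMBDA = 1.1e-52      # 1/m^2, assumed cosmological constant

two_pi_a0 = 2 * math.pi * A0
c_h0 = C * H0
c2_root_lambda = C ** 2 * math.sqrt(LAMBDA / 3)

print(f"2*pi*a0         = {two_pi_a0:.2e} m/s^2")
print(f"c*H0            = {c_h0:.2e} m/s^2")
print(f"c^2*sqrt(L/3)   = {c2_root_lambda:.2e} m/s^2")


def deep_mond_speed(mass):
    """Asymptotic circular speed in the low-acceleration regime: v^4 = G M a0."""
    return (G * mass * A0) ** 0.25


v = deep_mond_speed(1e11 * M_SUN)  # 1e11 solar masses is a hypothetical galaxy
print(f"Asymptotic speed for 1e11 solar masses: {v / 1e3:.0f} km/s")
```

With these inputs the three accelerations agree to within a factor of about one and a half. Whether that's a deep cosmological connection or a coincidence is a different matter, and the arithmetic alone can't settle it.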

Entropic gravity

The Wikipedia dark matter article says Erik Verlinde’s entropic gravity provides a theoretical basis for MOND. But entropic gravity is “based on string theory, black hole physics, and quantum information theory, and it describes gravity as an emergent phenomenon that springs from the quantum entanglement of small bits of spacetime information”. There’s more in Verlinde’s 2010 paper on the origin of gravity and the laws of Newton. He says “space is emergent through a holographic scenario”, and “gravity is explained as an entropic force caused by changes in the information associated with the positions of material bodies”. And that “it is actually this law of inertia whose origin is entropic”. Ah, the holographic scenario and quantum information. Knowing what I do about gravity and mass and the wave nature of matter and why an electron falls down, I would venture to suggest that entropic gravity contradicts everything Einstein ever said. And that it’s foundation free and totally speculative, and has no evidential support whatsoever. Au contraire, see once more: gravity is not an entropic force by Archil Kobakhidze dating from 2011. It says quantum mechanical experiments on gravitationally-bound neutron states unambiguously disprove Verlinde’s approach. And yet such is the state of contemporary physics, entropic gravity just keeps on going like the Duracell bunny.

TeVeS

Another alternative theory is Jacob Bekenstein’s TeVeS. It’s described as a relativistic generalization of MOND. See Bekenstein’s 2004 paper relativistic gravitation theory for the MOND paradigm. It models MOND using a unit vector field, a dynamical scalar field, and a nondynamical scalar field. But like entropic gravity it lacks foundation and it’s going against Einstein and the evidence. See Einstein’s 1929 essay on the history of field theory. That’s where Einstein described a field as a state of space. He said “it can, however, scarcely be imagined that empty space has conditions or states of two essentially different kinds”. So it can scarcely be imagined that empty space has conditions or states of three different kinds. All at once. All in the same place. Also, see reportage such as the 2010 Physicsworld article galaxy study backs general relativity. It says this: “the observed ratio, dubbed EG, has a value of 0.39 ± 0.06. This agrees with general relativity, which predicts a value of 0.4. Crucially, the measurement rules out the tensor vector scalar (TeVeS) model of modified gravity, which has an EG of 0.22 and does not need dark matter”. So it looks like TeVeS has been ruled out by experimental evidence. Bekenstein isn’t around any more to refute this claim or propose a modification, and it looks like there’s only one recent paper on the arXiv that’s supportive of TeVeS. A later paper says “the generalized TeVeS theory is excluded”.

f(R) gravity

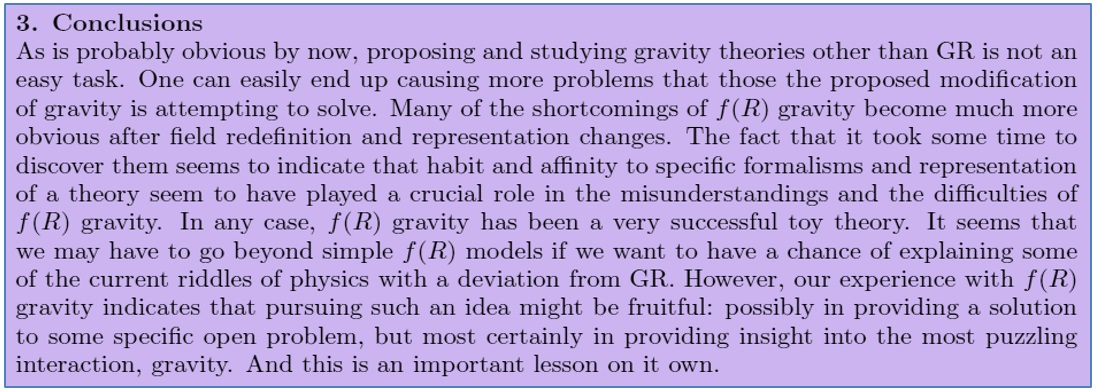

The 2010 Physicsworld article also mentions f(R) gravity. It says this: “the result does not, however, preclude the f(R) theory – which is more similar to general relativity and has EG values in the 0.328–0.365 range”. As the Wikipedia f(R) gravity article says, “f(R) gravity is actually a family of theories, each one defined by a different function of the Ricci scalar. The simplest case is just the function being equal to the scalar; this is general relativity”. It’s similar to general relativity because it’s a “generalization” of general relativity. Milgrom mentioned it on page 5 of his 2009 paper new physics at low accelerations (MOND): an alternative to dark matter. He said “we see that the modification of GR entailed by MOND does not enter here by modifying the ‘elasticity’ of spacetime (except perhaps its strength), as is done in f(R) theories and the like”. That sounds promising, especially if you’ve read the Einstein digital papers. The original 1970 f(R) paper by Hans Buchdahl is non-linear Lagrangians and cosmological theory, and it talks about φ rather than f. It also talks about extending Einstein’s theory in a cosmological context, which I think is good. However I disliked the claim that the universe oscillates between singular states. I also disliked the seeming lack of understanding of how gravity works, or how electrodynamics works. Again there seems to be no actual physics foundation, and because of this, f(R) gravity comes across as something of a mathematical speculation rather than a true development of general relativity. Perhaps that’s why Thomas Sotiriou was somewhat negative in his 2008 paper 6+1 lessons from f(R) gravity:

Fair use excerpt from 6+1 lessons from f(R) gravity by Thomas Sotiriou

Perhaps that’s why the Wikipedia f(R) gravity article says “Palatini f(R) theories appear to be in conflict with the Standard Model, may violate Solar system experiments, and seem to create unwanted singularities”. You can read more in the Scholarpedia article F(R) theories of gravitation, by Salvatore Capozziello and Mariafelicia De Laurentis. They say f(R) gravity is a class of effective theories where the paradigm is that general relativity has to be extended. That’s to address ultraviolet and infrared scale shortcomings which are “essentially due to the lack of a final, self-consistent theory of quantum gravity”. They say the goal is “to encompass phenomena like dark energy and dark matter under a geometric standard”. But there’s no mention of electromagnetic curvature, or of the shortcomings of QED. More importantly they mention the speed of light only once, which means the general relativity they’re extending doesn’t look much like Einstein’s general relativity to me. All in all f(R) gravity doesn’t seem to be effective enough.

MACHOs

So, if modified or extended gravity theories don’t hit the spot, what other alternatives are there? There’s always Kelvin’s original idea that “many of our supposed thousand million stars, perhaps a great majority of them, may be dark bodies”. After all, stars don’t last forever. Stars undergo stellar evolution, and stellar remnants include white dwarfs, black dwarfs, neutron stars, and black holes. There are also brown dwarfs, which never became proper stars at all. Why can’t dark matter consist of such objects, plus planets, planetoids, and other plain vanilla matter? Matter that doesn’t shine? That we can’t see? Strictly speaking some of it does, but as far as we can tell, not enough. The stellar remnants et cetera are collectively known as MACHOs, and people have searched long and hard for them. But they just haven’t found enough. See the MACHO project: microlensing results from 5.7 years of LMC observations. It dates from 2000 and it’s by Charles Alcock and 25 other authors. It backs up what Katherine Freese, Brian Fields, and David Graff said in 1999 in limits on stellar objects as the dark matter of our halo: non-baryonic dark matter seems to be required. So does the EROS experiment, which started looking for sombre objets in 1990. See the 2007 paper limits on the MACHO content of the galactic halo from the EROS-2 survey of the Magellanic clouds. It’s by Patrick Tisserand and 37 other authors. They too say there just aren’t enough MACHOs to account for all the dark matter.

Molecular hydrogen

But there are other types of ordinary dark matter, such as molecular hydrogen gas H2. See the 2004 paper molecular hydrogen as baryonic dark matter by Andreas Heithausen. He says “an interesting alternative is molecular hydrogen (Pfenniger & Combes 1994; Gerhard & Silk 1996; Walker & Wardle 1998), because at most temperatures in the interstellar medium it cannot be observed directly”. Referencing papers include simulations of galactic disks including a dark baryonic component by Yves Revaz, Daniel Pfenniger, Francoise Combes, and Frédéric Bournaud dating from 2009. They say things like “if molecular gas fragmentation goes down to sub-stellar mass clumps staying cold, these clumps are unable to free nuclear energy and become stars”. They also refer to missing mass in collisional debris from galaxies by Bournaud et al dating from 2007. That talks about the way dwarf galaxies formed from galactic collisions ought to be free of non-baryonic dark matter. However observations suggest these dwarf galaxies contain twice as much dark matter as visible matter. Hence “this result most likely indicates the presence of large amounts of unseen, presumably cold, molecular gas”. So why isn’t this molecular hydrogen our dark matter? Or dim matter if you prefer?

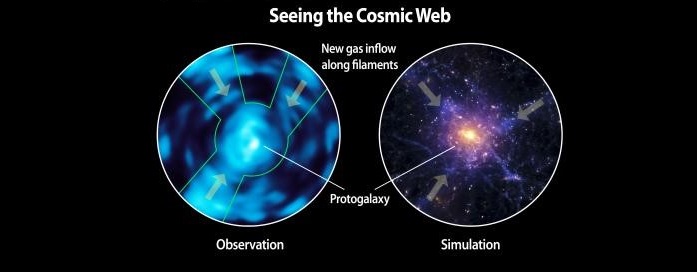

Lyman-alpha Image by Christopher Martin et al and Caltech

It isn’t because people have used carbon monoxide or formaldehyde to map molecular hydrogen. That doesn’t work because carbon and oxygen are created in stars, and there could be a lot of molecular hydrogen where there are no stars. It isn’t because people have mapped atomic neutral hydrogen using the 21cm hydrogen emission, see for example the HI4PI survey. That doesn’t work because it takes UV light to break down molecular hydrogen, and space is dark where there are no stars. Instead it’s because molecular hydrogen can be detected from its 900–1130Å absorption. See the 2001 paper molecular hydrogen in high-velocity clouds by Philipp Richter, Kenneth Sembach, Bart Wakker, and Blair Savage. Also see the 2016 paper molecular hydrogen absorption from the halo of a z~0.4 galaxy by Sowgat Muzahid, Glenn Kacprzak, Jane Charlton, and Christopher Churchill. It talks about the Lyman-alpha forest of spectral absorption lines. It’s observed when the light from a quasar passes through hydrogen clouds at different redshifts on its way to us. This Lyman-alpha forest “can be used to determine the frequency and density of clouds containing neutral hydrogen”. The conclusion is that molecular hydrogen is dark, and it’s matter, but there just isn’t enough of it.

Neutrinos

Neutrinos are said to be matter too. You can’t see neutrinos. So they’re dark. So they’re dark matter. However there aren’t enough of them either. They were a candidate once. But as per the Wikipedia dark matter article, “they can only supply a small fraction of dark matter”. See the 2001 paper whatever happened to hot dark matter? by Joel Primack. He says neutrinos are hot dark matter aka HDM. That’s because they move fast. He also says “for a few years in the late 1970s and early 1980s, hot dark matter looked like the best bet dark matter candidate”. He tells us how HDM models led to a top-down cosmological structure formation, wherein superclusters form before galaxies. He also tells us that such models were abandoned by the mid 1980s because they didn’t match observations of very old galaxies. Interestingly enough he also says we now have three independent ways of estimating the age of the universe, and their agreement suggests that GR works on the largest scales. We’ll come back to that. But for now he also says the maximum neutrino contribution is slight, and quotes a proportion $\Omega_\nu \geq 10^{-3}$. See the universe review dark energy article which says they comprise circa 0.3% of the mass-energy of the universe. It is not nearly enough.
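
To put rough numbers on that, here is a minimal Python sketch using the commonly quoted approximation that the neutrino density parameter is the summed neutrino mass divided by about 93.14 eV, divided again by h². That relation, the choice of h = 0.7, and the 0.06 eV example mass are all my assumptions rather than anything taken from Primack's paper.

```python
EV_PER_OMEGA_H2 = 93.14  # eV, approximate conversion factor (assumed)


def omega_nu(sum_masses_ev, h=0.7):
    """Approximate neutrino density parameter for a given summed mass in eV."""
    return sum_masses_ev / EV_PER_OMEGA_H2 / h ** 2


def sum_masses_for(omega, h=0.7):
    """Summed neutrino mass in eV that would give the density parameter omega."""
    return omega * h ** 2 * EV_PER_OMEGA_H2


low = omega_nu(0.06)            # 0.06 eV is an assumed illustrative summed mass
needed = sum_masses_for(0.003)  # mass that would give the circa 0.3% figure
print(f"Omega_nu for 0.06 eV: {low:.1e}")
print(f"Summed mass for 0.3%: {needed:.2f} eV")
```

Either way the fraction comes out at the level of a tenth of a percent or so, which is nowhere near what's needed.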

Sterile neutrinos

There has of course been talk of sterile neutrinos, but see the 2016 Nature article icy telescope throws cold water on sterile neutrino theory. Also see the 2017 Science article weird sterile neutrinos may not exist, suggest new data from nuclear reactors. And the 2017 Symmetry magazine article sterile neutrinos in trouble. Sterile neutrinos are hypothetical particles, and there’s no evidence for them whatsoever. Next.

Photons

Photons aren’t matter, and they have no rest mass. But their energy has a mass-equivalence. When you trap a photon in a mirror-box it increases the mass of that system, and the gravity too. In similar vein a cube of space has a greater mass-equivalence if it contains photons. Photons are light, but in a way they’re also dark. Look up at the Moon and note the space next to it. There’s a flux of photons in that space, but it’s dark. You can’t see the photons going past the Moon. However as per neutrinos, there just aren’t enough photons in the universe. The universe review dark energy article says they comprise only about 0.005% of the mass-energy of the universe. Again it is not nearly enough.
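
The 0.005% figure can be roughly reproduced from the cosmic microwave background alone. Here is a hedged Python sketch that compares the energy density of black-body radiation at 2.725 K with the critical density. The CMB temperature and the Hubble constant of 70 km/s/Mpc are my assumed inputs, and starlight is ignored, so it's a back-of-envelope check rather than a proper inventory.

```python
import math

SIGMA = 5.670374419e-8  # W m^-2 K^-4, Stefan-Boltzmann constant
C = 2.99792458e8        # m/s
G = 6.674e-11           # m^3 kg^-1 s^-2
MPC = 3.0857e22         # m

T_CMB = 2.725           # K, assumed CMB temperature
H0 = 70e3 / MPC         # 1/s, assumed Hubble constant of 70 km/s/Mpc

# Energy density of black-body radiation: u = (4 sigma / c) T^4
photon_energy_density = 4 * SIGMA / C * T_CMB ** 4

# Critical density expressed as an energy density: 3 H0^2 c^2 / (8 pi G)
critical_energy_density = 3 * H0 ** 2 / (8 * math.pi * G) * C ** 2

photon_fraction = photon_energy_density / critical_energy_density
print(f"Photon fraction of critical density: {photon_fraction:.1e}")
```

Because the radiation energy density goes as the fourth power of the temperature, the fraction is very sensitive to the temperature you plug in, but at a few kelvin it's always tiny.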

Axions

Another hypothetical particle is the axion. It was first proposed in 1977. But see the 2017 register article particle boffins calculate new constraints for probability of finding dark matter. The hunt for axions at the CAST experiment at CERN has been fruitless. It’s also been fruitless at the HAYSTAC experiment at Yale, and at the ADMX experiment in Washington state. And at the Fermi large-area telescope. This no-show is perhaps not surprising, because the axion was proposed to resolve a strong CP problem with QCD. See the Berkeley website on dark matter candidates. It says CP symmetry would prevent the neutron from having a large electric dipole moment, and that without it, it’s “very hard to understand why such a dipole moment has not yet been detected”. It would seem that the axion was proposed by physicists who didn’t have a clear picture of what a neutron is. And since incorporating the axion into electromagnetism “has the effect of rotating the electric and magnetic fields into each other”, these physicists didn’t have a clear picture of electromagnetism either. All in all whilst we can cut such exotic particles some slack for a few years, after forty years without detection one really has to ask whether there’s any foundation at all. The answer is no.

WIMPs

It’s similar for WIMPs. Weakly interacting massive particles were also proposed in 1977. See David Spergel’s overview in his 1998 article on particle dark matter. He refers to limits on masses and number of neutral weakly interacting particles by Piet Hut dating from 1977. The first WIMP was a fourth-generation neutrino, which has now been ruled out. Spergel also refers to R-parity and says supersymmetry is an elegant extension of the standard model of particle physics, something which transforms bosons into fermions and vice-versa. If however you have some idea of how pair production works, supersymmetry looks utterly superfluous. I am reminded of the selectron, which was proposed by physicists who don’t have a clue what an electron is. Hence I am not surprised that no WIMPs have been detected. See the recent limits section of the Wikipedia WIMPs article. The XENON1T experiment hasn’t found any WIMPs. Nor has LUX, or SuperCDMS, or HESS or PandaX-II or PICO-60. Hence the 2016 New Scientist article Dark matter no-show puts favoured particles on death row. Hence the March 2017 Stony Brook conference beyond WIMPs: from theory to detection. The blurb says WIMPs have been the dominant paradigm for three decades. And then it says this: “null results from direct and indirect detection experiments searching for dark matter, as well as a dearth of interesting candidates at the Large Hadron Collider at CERN, motivate the exploration of other well-motivated, non-WIMP dark matter candidates”. I’m afraid to say WIMPs are not long for this world. Because supersymmetry is a dead man walking, and before too long they’ll put a stake through its heart and bury it deep. Provided of course that nobody comes up with a five-sigma bump on a graph and says “I think we have it”.

None of the above

So, what’s left? See what Stacy McGaugh said in cold dark matter and experimental searches for WIMPs. He said this: “does that mean that dark matter is just the modern version of Æther? A ubiquitous, invisible substance that simply must exist?” Yes Stacy, it does. Because despite what you can read on the internet about some alleged consensus that dark matter is composed of subatomic particles, there is no evidence for any such particles, even after forty years. And because as Joel Primack said, we have three independent ways of estimating the age of the universe, and their agreement suggests that GR works on the largest scales. Yes, general relativity works. The first evidence for it came in 1919 after only three years. With a war on. That’s three years after Einstein said “the energy of the gravitational field shall act gravitatively in the same way as any other kind of energy”. The energy of the gravitational field isn’t made up of baryons, or WIMPs, or axions, or neutrinos, or MACHOs. It is none of the above.

Now I just can’t wait for the next article. Thank you for the very good job on this blog. Very informative and well written.

Happy Holidays

Many thanks Alessandro. Merry Christmas and a Happy New Year!

Shouldn’t you mention Quantised Inertia here? It is a bit like MOND but without the drawbacks and no new fudge factors. No Dark Matter required. Isn’t it a big warning sign that in order to fix our theory we have to invent something, and then when we don’t find this thing shouldn’t we question our theory not the experimental observations? (e.g. Wide Binaries) see here: https://physicsfromtheedge.blogspot.com/

Hi!

I am fascinated by your blog, I have to say it is very addictive!

I am following the works of Mike McCulloch on his theory of quantized inertia, which to me sounds like a great theory that explains e.g. galaxy rotation curves without the need for dark matter and has no adjustable parameters. I would be curious to hear about your opinion on that!

I found your blog because I was searching for more info regarding Alexander Unzicker’s book “Einstein’s Lost Key” (basically his VSL version of GR). I now wonder if the way Unzicker sees GR is compatible with the way you describe gravity. I am just an engineer with a curiosity in physics, so please excuse my perhaps trivial questions 🙂

Anyways, keep up the good work!

Many thanks Martin.

I’m afraid I don’t recall reading about Mike McCulloch’s theory of quantized inertia. I took a quick look at it here: https://quantizedinertia.com/about/ . I’m afraid to say I thought it was wrong. Inertia isn’t quantized. A photon has a non-zero inertial mass. This isn’t quantized, in that a photon can have any inertial mass you like. Of course, inertial mass is really a measure of energy. Then energy E=hf, and the h is what gives the quantum nature of light, because it’s the same for all photons. For a cherry on top, I don’t think Unruh radiation exists.

I know Alexander Unzicker. He’s a friend. But I haven’t read that book I’m afraid. My bad. I’ve just bought it off Amazon.co.uk. I seem to recall thinking this wasn’t right: “that would have explained the origin of gravity by referring to distant masses in the universe”. Einstein was a bit of a Mach fan, but as far as I know he ended up dropping Mach’s principle. I like to think that I describe gravity much as Einstein did, though I don’t think Einstein ever employed the closed-path wave nature of matter. He did however employ the local speed of light in the formal side of the theory. I think that was a mistake myself. Would it be the same theory if he hadn’t? Mathematics is a language, and there’s more than one way to say the same thing, so I’d say yes.

The Suncell people are claiming the “hydrino” is Dark Matter:

https://brilliantlightpower.com/suncell/

Sadly Richard, the Suncell people don’t even understand the electron. See this. If they don’t understand the electron they don’t understand the proton. And if they don’t understand the electron and the proton they don’t understand the hydrogen atom. They don’t understand why the binding energy is -13.6 eV. So they’re winging it with the hydrino. Which, by the way, nobody has ever seen. On top of that, how can something comprised of a proton and an electron be dark matter? They’re winging it with their dark matter claim too.

Hi PD – first of all kudos for the blog, which makes for a fascinating read.

Regarding Mills’ work, perhaps there’s a bit more to it than you sketch just here.

“Nobody has ever seen it” is actually not that bad for something that purports to be dark matter. For those willing to look, there is some literature with supporting evidence for hydrinos.

I think it’s fair to say that Mills has his own unique understanding of the electron, which evidently is not the same as yours. It does, however, appear to produce a wide range of atomic and molecular calculations among which the binding energies of every electron in virtually every molecule imaginable, as well as the relative birth weights of the electron family to within experimental precision. Perhaps all models are wrong, but some are useful…

The rhetorical: “how can something comprised of a proton and an electron be dark matter?” indicates that you may be missing some basic elements of Mills’ theory. A helpful one-page summary of Mills’ key findings can be found online. It reads, under head-on 6. Dark Matter : “A unique feature of hydrino atoms is that they lack the ability to form excited states… Hydrino atoms are invisible; unlike all other forms of matter, they do not absorb and emit light. This makes them a very convincing candidate for dark matter.”

I went to Wikipedia and read a very interesting article about Mills’s companies and investment hoard. I tend to agree with the authors of said article. Lots and lots of money has been raised, but absolutely no credible products have been built and delivered for public inspection, for years on end!

Like the old saying goes : If it sounds just toooooo good to be true, then it’s probably a con.

Jay: I’m sorry, but I just don’t buy that supporting evidence, and I think there’s a lot wrong with Mills’s physics. I ought to go through some of it and give you some details I suppose, but I can’t right now. Apologies again.