The black hole firewall is a relatively recent idea. On Wikipedia you can read how it “was proposed in 2012 by Ahmed Almheiri, Donald Marolf, Joseph Polchinski, and James Sully as a possible solution to an apparent inconsistency in black hole complementarity”. Their proposal is known as the AMPS firewall, and the title of their paper is black holes: complementarity or firewalls?

They cannot all be true

They start by saying “we argue that the following three statements cannot all be true: (i) Hawking radiation is in a pure state, (ii) the information carried by the radiation is emitted from the region near the horizon, with low energy effective field theory valid beyond some microscopic distance from the horizon, and (iii) the infalling observer encounters nothing unusual at the horizon”. That sounds like a good start. They also refer to the black hole information paradox, wherein a black hole is said to destroy such things as baryon number. In physics if you hit a paradox it means something’s wrong somewhere, as per reductio ad absurdum. They then talk about black hole complementarity, which is where information is allegedly both “reflected at the event horizon and passes through the event horizon”. They say that this can’t be right, and that there are some “sharp and perhaps unpalatable alternatives”. They also say “the tensions noted in this work may lead the reader to wonder whether even the most basic coarse-grained properties of Hawking emission as derived in [34] are to be trusted”. This is promising stuff. Sadly they miss the trick by saying “the thermodynamic picture of black holes now rests on many pillars that remain intact”. But on the plus side they do conclude that “the most conservative resolution is that the infalling observer burns up”. This sounds reasonable given that the distant observer allegedly sees Susskind’s elephant get thermalized. So it sounds reasonable that the black hole is surrounded by a “ring of fire”:

Ring of fire image by Sam Chivers from New Scientist

There’s a reader-friendly article on all this by Zeeya Merali in the Huffington Post. It’s called black hole ‘firewall’ theory challenges Einstein’s equivalence principle. She tells us that the team’s verdict shocked the physics community because their firewall would violate a foundational tenet of physics known as the equivalence principle. She quotes Raphael Bousso saying the firewall idea “shakes the foundations of what most of us believed about black holes”. She quotes Steve Giddings describing the situation as “a crisis in the foundations of physics that may need a revolution to resolve”. She also talks about the monogamy of entanglement.

The monogamy of entanglement

The monogamy of entanglement is another paradox, wherein the emitted Hawking radiation particle can’t be entangled with two systems at once. It can’t be entangled with the particle that fell into the black hole, and with the Hawking radiation that was previously emitted. As I said, in physics if you hit a paradox it means something’s wrong. If you hit two, it means something’s definitely wrong. As to what, well, there’s the rub. Merali tells us that “to escape this paradox, Polchinski and his co-workers realized, one of the entanglement relationships had to be severed”. She says they were reluctant to abandon the entanglement needed to encode information in the Hawking radiation, so they severed the binding between the escaping particle and its infalling twin. She quotes Polchinski saying “it’s a violent process, like breaking the bonds of a molecule, and it releases energy”. That’s an odd thing to say, because energy is released when bonds are formed, not when they’re broken. Merali also quotes Ted Jacobson saying “it was outrageous to claim that giving up Einstein’s equivalence principle is the best option”. That’s odd too. Because it isn’t outrageous at all.

Penrose did his own thing

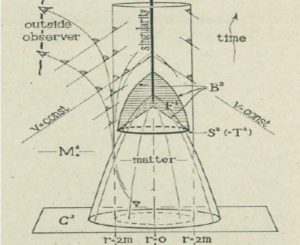

As to why, I need to rewind. In 1964 Roger Penrose wrote a paper on gravitational collapse and space-time singularities. He started by saying “the discovery of the quasi-stellar radio sources has stimulated renewed interest in the question of gravitational collapse”. He went on to say this: “it has been suggested by some authors [1] that the enormous amounts of energy that these objects apparently emit may result from the collapse of a mass of the order of (10⁶ – 10⁸)M☉ to the neighborhood of its Schwarzschild radius”. That sounds reasonable too, and it is in line with the mass deficit. But oddly enough, Penrose appears to have ignored it. He also said “the general situation with regard to a spherically symmetrical body is well known [2]”. He was referring to Oppenheimer and Snyder’s 1939 frozen star paper on continued gravitational contraction. But he ignored that too. Because he was pushing point-singularities:

Image from Gravitational collapse and space-time singularities by Roger Penrose

He wasn’t interested in what Einstein said about why light curves. Or in Einstein’s 1939 paper. That’s where Einstein said light rays “take an infinitely long time (measured in “coordinate time”) to reach the point r = μ/2”. Penrose didn’t even seem interested 2 years later in his 1966 Adams prize essay where he appealed to authority by referring to Eddington-Finkelstein coordinates. Even though neither Eddington nor Finkelstein “ever wrote down these coordinates or the metric in these coordinates”. Most of all, Penrose didn’t seem interested in how gravity works and why matter falls down or what a black hole really was. It’s as if he was doing his own thing, playing fast and loose with what had gone before.

The frozen star was frozen out

Hawking seems to have done something similar. In a brief history of time he referred indirectly to Oppenheimer and Snyder’s 1939 frozen star paper. But he told a twisted tale. He said this: “Oppenheimer’s work was then rediscovered and extended by a number of people. The picture that we now have from Oppenheimer’s work is as follows. The gravitational field of the star changes the paths of light rays in space-time from what they would have been had the star not been present. The light cones, which indicate the paths followed in space and time by flashes of light emitted from their tips, are bent slightly inward near the surface of the star. This can be seen in the bending of light from distant stars observed during an eclipse of the sun. As the star contracts, the gravitational field at its surface gets stronger and the light cones get bent inward more. This makes it more difficult for light from the star to escape, and the light appears dimmer and redder to an observer at a distance. Eventually, when the star has shrunk to a certain critical radius, the gravitational field at the surface becomes so strong that the light cones are bent inward so much that light can no longer escape”. That’s not what Oppenheimer and Snyder said. Hawking must have known this, and about the Physics Today article introducing the black hole by Remo Ruffini and John Wheeler. They said the collapse is continuing because even after an infinite time, as measured by a distant observer, the collapse is still not complete. That’s why they said “in this sense the system is a frozen star”. But Hawking doesn’t even mention the word frozen. The frozen star was frozen out.

Einstein was frozen out too

Einstein was frozen out too. The word Einstein occurs 62 times in a brief history of time. But Hawking didn’t refer to Einstein saying why light curves. Nor did he refer to Einstein’s 1939 paper about light rays taking forever to reach the event horizon. Hawking was appealing to Einstein’s authority whilst flatly contradicting him. Which is why Hawking got it wrong in his 1976 paper on the breakdown of predictability in gravitational collapse. That’s where he said this: “as was shown in a series of papers by Penrose and this author [3-6], a space-time singularity is inevitable in such circumstances provided that general relativity is correct”.

General relativity is correct

General relativity is correct, it’s one of the best-tested theories we’ve got. The evidence started coming in in 1919 after a mere three years, with a war on, courtesy of Arthur Eddington. Compare and contrast with Hawking radiation. Not only that, but the evidence of optical clocks says the speed of light is spatially variable. That’s what Einstein said time and time again. But Hawking didn’t refer to that. Instead he referred to the principle of equivalence and said gravity is always attractive, and that “this leads to singularities in any reasonable theory of gravitation”. But he didn’t refer to the event horizon as a singularity like Einstein did. Instead he said the “apparent” singularity at the event horizon was “simply due to a bad choice of coordinates”. His general relativity wasn’t Einstein’s general relativity. It was some ersatz popscience version of the real thing.

But the waterfall analogy is not

That’s why Hawking talked about “such a strong gravitational field that even the ‘outgoing’ light rays from it are dragged back”. He didn’t realise that light goes slower when it’s lower, so the ascending light beam speeds up. So in a strong gravitational field, it doesn’t get dragged back at all. It speeds up all the more. That’s what Einstein’s variable speed of light says, that’s what optical clocks say, and that’s what general relativity says. Yes, general relativity is correct, but the waterfall analogy is not. We do not live in some Chicken-Little world where space is falling down:

Image from Sunil Bisoyi’s blog, said to be from a Leonard Susskind article in Scientific American April 1999

That means Hawking’s understanding of gravity and singularities was not correct. Which is why he talked about the event horizon not as a place where the coordinate speed of light is zero, but as a surface which “emits with equal probability all configurations of particles”. Spontaneously, like worms from mud. Regardless of the infinite time dilation. Regardless of the very reason why the light can’t get out. And then without a trace of irony, Hawking talked about a “principle of ignorance”, and said “something obviously goes badly wrong”. It did indeed.

Something obviously goes badly wrong

As for when, it looks like things started to go wrong after Einstein died, in the “golden age“ of general relativity in the sixties. Things were definitely badly wrong by 1983 when Gerard ‘t Hooft wrote his paper on the ambiguity of the equivalence principle and Hawking’s temperature. He said the laws governing a system in a gravitational field can be obtained by viewing the field as generated by an acceleration relative to an inertial frame. He said “strictly speaking this procedure only works if the gravitational field is homogeneous”. It’s as if he’d never read Einstein’s Leyden Address. That’s where Einstein described a gravitational field as a place where space was “neither homogeneous nor isotropic”. Furthermore ‘t Hooft also said “by homogeneous we mean that there is an inertial frame in which the entire gravitational field disappears”. It’s as if he’d never read Einstein’s Relativity: The Special and General Theory. That’s where Einstein said it’s “impossible to choose a body of reference such that, as judged from it, the gravitational field of the Earth (in its entirety) vanishes”. As Peter Brown points out in Einstein’s gravitational field, a uniform gravitational field has no tidal forces and thus no space-time curvature, so it’s a contradiction in terms. So it’s not a good idea for ‘t Hooft to claim that uniform gravitational fields “occur in Nature almost by definition” when they don’t occur in nature at all. Nor is it a good idea to claim that Hawking radiation “originates in a region where the collapsing matter has a close to infinite kinetic energy per particle”. Not when gravity doesn’t add any energy to a falling particle. Gravity converts potential energy, which is mass-energy, into kinetic energy. Hence when the latter is radiated away, the particle is left with a mass deficit. And last but not least, it is not a good idea to use the wrong equivalence principle.

The wrong equivalence principle

In his 1993 paper the stretched horizon and black hole complementarity, Leonard Susskind said “the belief is based on the equivalence principle”, and “it seems certain that a freely falling observer experiences nothing out of the ordinary when crossing the horizon”. Only it doesn’t. The equivalence principle doesn’t say the falling observer experiences nothing out of the ordinary when he encounters the hard unyielding ground, and it doesn’t say the falling observer experiences nothing out of the ordinary when he encounters a black hole. As for what it does say, see Kevin Brown’s mathspages article on the many principles of equivalence. He tells us how the definition of the equivalence principle has undergone several changes over the years, and that “the modern statement of the strong equivalence principle, of the assertion that the laws of physics are the same for all frames of reference (i.e., independent of velocity) is also conceptually quite distinct from the original meaning of Einstein’s equivalence principle”. The story starts with Einstein’s happiest thought in 1907. That’s when he thought of an observer in free-fall from the roof of a house, and realized the observer wasn’t feeling any force. It was as if there was no force on the observer. Or on anything falling alongside him. Setting aside air resistance and any surrounding buildings, falling would feel like inertial motion without acceleration. Hence page 149 of MTW describes the equivalence principle thus: “in a freely falling (non-rotating) laboratory occupying a small region of spacetime, the laws of physics are those of special relativity”. You can read the same thing on the Wikipedia equivalence principle article: “the outcome of any local non-gravitational experiment in a freely falling laboratory is independent of the velocity of the laboratory and its location in spacetime”. However in 1939 Einstein said light rays take an infinitely long time to reach the event horizon. 
That doesn’t square with a freely-falling observer experiencing “nothing out of the ordinary when crossing the horizon”. That’s because it isn’t Einstein’s principle of equivalence. John Norton explained what that was in his 1985 paper what was Einstein’s principle of equivalence? He said it was a special relativity principle that dealt only with fields that could be transformed away. This means Einstein was talking about the accelerating observer, not the inertial observer. This is why Kevin Brown says the term equivalence principle should be reserved for assertions about the “sameness” of the effects of gravitation and extrinsic acceleration. And why Norton talked of an old view and a new view, and said “the equivalence of all frames embodied in this new view goes well beyond the result that Einstein himself claimed in 1916”.

The Einstein equivalence principle

Chung Lo said essentially the same in 2015 in rectification of general relativity. He said “many were confused that his 1916 equivalence principle was the same 1911 assumption of equivalence that has been proven invalid”. He blames Wheeler and others: “Wheeler led his school at Princeton University while his colleagues, Sciama and Zel’dovich (another H-bomb maker) developed the subject at Cambridge University and the University of Moscow”. Dennis Sciama strongly influenced Penrose and of course Hawking. Lo goes on to say “the misinterpretations of the theory and errors such as the singularity theorems have been accepted as part of the faith”. It sounds like heavy stuff, but it’s true. Take another look at the Wikipedia equivalence principle article: “this was developed by Robert Dicke as part of his program to test general relativity. Two new principles were suggested, the so-called Einstein equivalence principle and the strong equivalence principle, each of which assumes the weak equivalence principle as a starting point”. The Einstein equivalence principle isn’t Einstein’s equivalence principle. The Wikipedia article also says “the outcome of any local non-gravitational experiment in a freely falling laboratory is independent of the velocity of the laboratory and its location in spacetime”. Even though Einstein didn’t. The article also says the fine-structure constant must not depend on where it’s measured. Even though the fine-structure constant is a running constant that varies with energy density, and so must vary with gravitational potential. Solar Probe Plus was going to test this, but it’s now a purely heliophysics mission. Why a fine-structure experiment isn’t on board is a mystery to me. Something else that’s a mystery to me is why anybody should take the wrong principle of equivalence so far that they end up with trips to the end of time and back, and elephants in two places at once.

The right equivalence principle

As for the right equivalence principle, I’m afraid that’s been taken too far too. In the Wikipedia equivalence principle article you can read how being on the surface of the Earth is like being inside an accelerating spaceship. That’s the equivalence principle most people know about. Look at google images, and that’s what you see:

Image by various contributors and Google

Note however that the two situations are not exactly the same. Standing still in inhomogeneous space is not identical to accelerating through homogeneous space. When you’re in your windowless room either on Earth or in the spaceship, light curves and matter falls down. But like Wikipedia says, the room has to be small enough so that tidal effects are negligible. As for how small, note that Einstein said the special theory of relativity is “nowhere precisely realized in the real world”. It’s only valid “in the infinitesimal”. Your room has to be an infinitesimal room for the principle of equivalence to be exactly valid there. This is why on page 20 of Einstein’s gravitational field, Peter Brown quoted John Synge talking about the midwife. Synge said the principle of equivalence performed the essential office of midwife at the birth of general relativity. But then he suggested that “the midwife be buried with appropriate honours”. See pages ix and x in the preface to Synge’s relativity: the general theory. The bottom line is that the principle of equivalence is only valid in an infinitesimal region. It’s only valid in a region of zero size, which is no region at all. That’s why in the Einstein digital papers you can read that “it was presented as a heuristic principle for finding the theory rather than as one of the theory’s main tenets”. Why people think it’s one of the theory’s main tenets is another mystery.

Gamma ray bursts

Yet another mystery is gamma-ray bursts. See for example the 2008 NASA article gamma-ray bursts: the mystery continues by Tony Phillips and Dauna Coulter. Or see the NASA HEASARC article the mysterious gamma ray bursts by Joslyn Schoemer et al. They say “one of astronomy’s most baffling mysteries is the undiscovered source of sudden, intense bursts of gamma rays”. Hawking referred to them in a brief history of time. He said “in fact bursts of gamma rays from space have been detected by satellites originally constructed to look for violations of the Test Ban Treaty. These seem to occur about sixteen times a month and to be roughly uniformly distributed in direction across the sky”. He was hinting that they might be caused by black hole explosions associated with Hawking radiation. Don’t forget that when Penrose wrote his epoch-making singularities paper in 1964 he said “the discovery of the quasistellar radio sources has stimulated renewed interest in the question of gravitational collapse”. Of course quasars aren’t quite the same as gamma-ray bursts, and the latter weren’t declassified until 1973. But they’re the same kettle of fish, to do with black holes and gravitational collapse. This is the sort of stuff that resurrected interest in general relativity. Only the real mystery is why gamma ray bursts are still a mystery. Especially when Friedwardt Winterberg explained them in 2001.

Winterberg’s proposal

See the 2013 AMPS paper an apologia for firewalls. Tucked away in the conclusion is footnote 31, containing a reference 87 to Winterberg’s 2001 paper gamma ray bursters and Lorentzian relativity. Winterberg talks about the direct conversion of an entire stellar rest mass into gamma ray energy. The nub of it is this: “if the balance of forces holding together elementary particles is destroyed near the event horizon, all matter would be converted into zero rest mass particles which could explain the large energy release of gamma ray bursters”. See the Wikipedia gamma ray burst article and note that “a typical burst releases as much energy in a few seconds as the Sun will in its entire 10-billion-year lifetime”. Also see the emission mechanisms section where you can read that: “some gamma-ray bursts may convert as much as half (or more) of the explosion energy into gamma-rays”. As I write Winterberg’s proposal is mentioned in the progenitors section of the Wikipedia article, albeit in a single line.
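As a rough check on the scale of those energy figures, here is a back-of-envelope sketch. The constants are standard rounded values. The function names are mine, and so is the simplifying assumption that the Sun shines at its present luminosity for the whole 10 billion years.

```python
# Back-of-envelope comparison: the Sun's lifetime light output
# versus the rest-mass energy of one solar mass.

L_SUN_W = 3.828e26          # nominal solar luminosity, watts
M_SUN_KG = 1.989e30         # solar mass, kilograms
C_M_PER_S = 2.998e8         # speed of light, metres per second
SECONDS_PER_YEAR = 3.156e7  # roughly one year in seconds


def solar_lifetime_output_joules(years: float = 1.0e10) -> float:
    """Energy radiated over `years`, assuming constant luminosity (a simplification)."""
    return L_SUN_W * years * SECONDS_PER_YEAR


def rest_mass_energy_joules(solar_masses: float = 1.0) -> float:
    """E = m c^2 for the given number of solar masses."""
    return solar_masses * M_SUN_KG * C_M_PER_S ** 2


if __name__ == "__main__":
    lifetime = solar_lifetime_output_joules()
    rest = rest_mass_energy_joules()
    print(f"Sun, 10 billion years of output: {lifetime:.2e} J")
    print(f"Rest-mass energy of one Sun:     {rest:.2e} J")
    print(f"Ratio:                           {lifetime / rest:.1e}")
```

On these numbers the Sun’s lifetime output comes to about 1.2 × 10^44 joules, which is less than a tenth of one percent of the roughly 1.8 × 10^47 joules locked up in its rest mass.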

Unfamiliar territory

Perhaps Winterberg’s gamma ray bursters and Lorentzian relativity hasn’t received much attention because it looks like unfamiliar territory. For example Winterberg talked about an ether. Some people might not like that because Einstein is said to have done away with the ether. But some people don’t know that in 1920 Einstein referred to space as the ether of general relativity. Winterberg also said “the event horizon appears first at the center of the collapsing body, thereafter moving radially outward”. Some people might not like that because it sounds back to front. But some people don’t know about frozen stars growing like hailstones: You’re a water molecule. You alight upon the surface of the hailstone. You can’t pass through this surface. But you are presently surrounded by other water molecules, and eventually buried by them. So whilst you can’t pass through the surface, the surface can pass through you. Winterberg also said in the limit v = c, massive particles become unstable and break up into zero rest-mass particles, and that “for v > c there can be no static equilibrium”. Some people might not like that because massive particles can never reach the speed of light. Only they can, and I’m not talking about Cherenkov radiation. Back in the day when John Michell was talking about dark stars, the idea was that a dark star has an escape velocity that “equals or exceeds the speed of light”. You can flip this around and reason that if I dropped you at some great distance from a black hole, you would survive the fall to the event horizon. At the event horizon you would be moving at the speed of light, or faster. Especially since you could have fired your gedanken boosters and accelerated towards the black hole. Then you might think you could go even faster as you continued to fall towards the point-singularity. But you can’t. You can’t go faster than light because of the wave nature of matter. 
We can make electrons out of light in pair production, and we can diffract electrons. You are made out of electrons. And other things too, but the same principle applies. You are made of matter, in a very real way matter is made of light, and matter cannot go faster than the light from which it is made.

When matter falls into a black hole

By now you may have spotted the fly in the ointment. The crucial point is this: matter falls down when it’s in a place where there’s a gradient in the speed of light. That’s what Einstein said, and that’s what optical clocks say too. It’s because of the wave nature of matter. When you fall towards a black hole it’s because the speed of light is reducing. The reducing speed of light is transformed into your downward motion. The more it reduces the faster you fall. You fall towards the event horizon, faster and faster. All the while the speed of light is getting slower and slower. Falling bodies don’t stop accelerating, and they don’t slow down. The descending light beam does, but your falling body does not. So there has to be some crossover point where you would end up going faster than the local speed of light. Your velocity v would exceed the “coordinate” speed of light c at that location. Can you fall faster than the local speed of light? No. Relativity says no, the wave nature of matter says no, and so do quasars. So you don’t have to worry about spaghettification. Or about the AMPS firewall. Matter cannot go faster than the light from which it is made, so something else happens. Something more dramatic. Something Einstein should have predicted in his 1939 paper where he said light rays and material particles take an infinitely long time to reach the event horizon:

Public domain image by NASA, see dying supergiant stars implicated in hours-long gamma-ray bursts

BOOM! A gamma ray burst happens. When I talked about dropping you such that you survived the fall to the event horizon, I was being economical with the truth. Because you were never going to survive the fall to the event horizon. Because you’re made of electrons and things, and gravity converts potential energy which is mass-energy into kinetic energy. This reduces the mass-energy of those electrons and things, and you can only take this so far. The electron is a 511keV electron because h is what it is, and only one E=hf energy yields the stable spin ½ standing-wave Poynting-vector thing that we call an electron. Reduce the mass-energy and it’s like trying to make a 411keV electron. The rotational energy flow cannot confine itself. The electron cannot persist as an electron. It’s something like stretching a helical spring. You can’t stretch it straighter than straight. When you try to do so, it breaks. In similar vein the wave that is the electron breaks. And because you are made of electrons, you are thermalised and ionised and marmalised. You are annihilated in a catastrophic 100% conversion of matter into energy. Every electron, every proton, and every last neutron is ripped apart and rendered down to gamma photons. And doubtless neutrinos, but they depart at the speed of light too so what’s the difference? The difference is that you do not encounter a firewall at the black hole event horizon. Because you are your own firewall, before you ever get to that event horizon.

Dark comments

You can take comfort in the conclusion that photons and neutrinos depart in different directions. Think of the right-hand rule and the left-hand rule, and stick your fingers out in orthogonal directions. Charge isn’t conserved, but angular momentum is. The directions of the resultant photons and neutrinos depend on the charge of the original particle. You can also take comfort in the conclusion that there is no Hawking radiation, and no information paradox either. I don’t, because I don’t care about information. What I care about is why all this isn’t common knowledge. It’s all so straightforward. It’s all so obvious, so simple when you’ve read the Einstein digital papers and know that the speed of light is not constant. But when I look around on the internet, I don’t see people talking about the Einstein digital papers. What I see is Winterberg making dark comments about string theorists and groupthink and priority and censorship. We’ll come back to that another time, because it’s important. But for now there’s some other dark stuff we need to look at. Called dark matter.

Hi John,

reading your interpretation of Winterberg, it seems that you assume that an infalling body’s velocity hits the speed of light before the event horizon, because the speed of light measured in Schwarzschild coordinates gets smaller when getting closer to the event horizon. But the same is true for the body’s velocity.

According to https://physics.stackexchange.com/a/170506/73067, the velocity in Schwarzschild Coordinates for an object falling towards the event horizon is

$$v = \left(1 - \frac{r_s}{r}\right)\sqrt{\frac{r_s}{r}}c_0$$

where $c_0$ shall be the speed of light far from any gravitation or, which is the same, measured locally, what is defined to be the “constant” speed of light.

According to https://physics.stackexchange.com/a/77280/73067 we have

$$\frac{c}{c_0} = 1-\frac{r_s}{r}$$

Inserting the previous formula we get

$$ v = \frac{c}{c_0}\sqrt{\frac{r_s}{r}}c_0 = c\sqrt{\frac{r_s}{r}} $$

which means the velocity of a massive body $v$ as well as the coordinate speed of light $c$ approach each other and become equal (and zero) exactly at the Schwarzschild radius. Winterberg actually seems to use the coordinate speed of light for a massive body too. I tend to rather believe John Rennie providing the first formula above.
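For anyone who wants to see the numbers, the two quoted formulas can be tabulated directly. This is only a minimal sketch of those two expressions, with r measured in units of $r_s$ and speeds in units of $c_0$. The function names are made up for illustration, and it assumes the first formula describes a body dropped from rest far away, which is the case it is usually derived for.

```python
import math


def light_speed_ratio(r_over_rs: float) -> float:
    """Radial coordinate speed of light c/c0 = 1 - rs/r, with r given in units of rs."""
    return 1.0 - 1.0 / r_over_rs


def infall_speed_ratio(r_over_rs: float) -> float:
    """Coordinate infall speed v/c0 = (1 - rs/r) * sqrt(rs/r).

    Assumption: the body is dropped from rest far away.
    """
    return (1.0 - 1.0 / r_over_rs) * math.sqrt(1.0 / r_over_rs)


def tabulate(radii):
    """Return (r/rs, c/c0, v/c0, v/c) rows; v/c is left undefined where c = 0."""
    rows = []
    for x in radii:
        c = light_speed_ratio(x)
        v = infall_speed_ratio(x)
        ratio = v / c if c > 0.0 else float("nan")
        rows.append((x, c, v, ratio))
    return rows


if __name__ == "__main__":
    for x, c, v, ratio in tabulate([100.0, 10.0, 4.0, 3.0, 2.0, 1.5, 1.1, 1.01]):
        print(f"r/rs={x:7.2f}  c/c0={c:.4f}  v/c0={v:.4f}  v/c={ratio:.4f}")
```

Taken at face value, these two formulas give $v/c = \sqrt{r_s/r}$, which stays below 1 everywhere outside $r_s$. The coordinate infall speed $v/c_0$ peaks at $r = 3r_s$ and then falls towards zero along with $c/c_0$.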

It’s misapplied, Harald. The important point to note is that a test body falls down because it’s in a place where there’s a gradient in the speed of light. If you drop the body at elevation A, it accelerates such that its falling speed at elevation B is related to the difference in the speed of light at the two elevations. This is directly related to optical clock rates at the two elevations. This acceleration continues as the body falls past elevations C, D, E et cetera. At any pair of elevations you care to choose, the lower clock goes slower, and the body accelerates as it falls from one to the other.

Note how John Rennie says “it’s still a bit involved for non-nerds so I’ll just quote the results”. He’s ducked the crucial issue, which is that at any elevation on his first chart you can drop the test body and it falls down, faster and faster. It doesn’t slow down. Falling bodies do not do this. They don’t fall slower because of that thing we label as gravitational time dilation, where optical clocks go slower when they’re lower. They fall because of it. Faster and faster. As for John Rennie’s second chart, it ignores the very reason why the body falls down – because there’s a gradient in the speed of light. The event horizon isn’t the place where escape velocity is the speed of light. It’s the place where the speed of light is zero.

Why don’t you ask a new question on stack exchange? Set the scene by selecting 10 locations at varying distances from a black hole, and at each location you drop a test body into the black hole. Ask if any of these test bodies (falling vertically in vacuo) slow down, or don’t fall down at all. PS: see my exchange with Harry McLaughlin who became very abusive when I talked about this on Quora. People who think they’re the experts don’t like to be challenged on this sort of thing, so mind how you go.

For the record, I’ve just posted this comment on Sabine Hossenfleder’s blog post Don’t ask what science can do for you.

“What a load of tosh Sabine. Gambling won’t save science. Fundamental research will, because when you read the old papers you see the low-hanging fruit and you understand the basics. The problem is that people are overly invested in bad science, and they’ve painted themselves into a corner with it. I’m not talking about SUSY, I’m talking about the Standard Model. It doesn’t explain what the photon is, how pair production works, or what the electron is. It doesn’t even explain how a magnet works, let alone the nuclear force or gravity. But nobody will admit these omissions, especially not Woit, and neither will you. And you will not permit any comments pointing that out, or pointing out that people need to do that fundamental research. So don’t give me Don’t ask what science can do for you. Not when you should Ask what you can do for it. Like I’ve been doing with my physics detective articles. You’re just carping, and censoring people like me whilst promoting yourself and peddling your book. Your contribution is negative. Like Woit, you ain’t part of the solution. You’re part of the problem”.

.

I expect it will never see the light of day, like most of my comments. If it does, I shall eat humble pie and delete this comment.

.

PS: I changed the name of this article from Firewall! to Gamma ray bursts, and now I’ve changed it back again.

The Physics Detective is right: In Feynman’s “The Character of Physical Law” regarding the finding of new physical laws, he states the following: “First we guess it. Then we compute the consequences of the guess… Then we compare the result of the computation with experiment to see if it works. If it disagrees it is wrong. In that simple statement is the key to science. It does not make any difference how beautiful your guess is, how smart you are, who made the guess, if it disagrees with experiment it is wrong”. For the phenomenon of gamma ray burst firewalls, where in a fraction of a second the rest mass energy of 50 solar masses, for example, is converted into radiation, we cannot make an experiment, but the Gods do it for us. And their experiments show that the explanation in terms of the weak, never observed Hawking radiation is wrong, and with it all the beautiful 10^500 11-dimensional String/M theories, including Susskind’s wormholes to other galaxies. F. Winterberg

Thanks Friedwardt. For the life of me I don’t know why more people don’t know about your 2001 paper. You deserve a trip to Stockholm for that. And yet here we are 18 years later, and gamma ray bursts are still said to be mysterious.

What is the size, in order of magnitude, of the firewall?

It’s big, Nero. Much bigger than the event horizon. Imagine you’re a long way from the event horizon, and you drop a brick into a black hole. If the gravitational field was uniform, the brick would reach the local speed of light and erupt into a gamma ray burst half way between you and the black hole. Then if you threw the brick, it would erupt into a gamma ray burst sooner. Of course, the gravitational field isn’t uniform – the force of gravity increases as you approach the black hole. So the crossover point is much closer to the black hole than that. I should work it out I suppose. But the firewall isn’t some thin skin around the event horizon. PS: Maybe Friedwardt Winterberg said something in his paper. I’ll check it out.

The image of the M87 galaxy black hole, together with the daily observed gamma ray bursts of black holes confirm the existence of the firewall phenomenon, and also that the Hawking radiation must be very small if it should exist at all.

Many thanks to the Physics Detective for bringing physics back to sanity from the mathematical speculations of the 11 dimensional string/M theories with the 10^500 possibilities.

Many thanks Friedwardt. I couldn’t see anything in your paper that said where the gamma ray burst occurs. I’ll have to look into that some more. As for bringing physics back to sanity, I’m afraid to say that as I learn more from the old papers, I find myself feeling more and more critical about the “mathematical speculations”. I’m afraid to say I don’t think they’re limited to string theory or M theory.

In my paper I give as an example the gamma ray burst of mass equal to 50 solar masses with an energy mc^2 = 10^56 erg, radiated in about 10^-4 seconds, that is 10^60 erg/second, orders of magnitude larger than the largest observed supernova.
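The figures quoted above can be checked to order of magnitude with a few lines of Python. This is only a sketch: the solar mass and speed of light are standard CGS values rounded to four figures, and the function names are made up for illustration rather than taken from the paper.

```python
# Order-of-magnitude check of the quoted burst figures, in CGS units.
SOLAR_MASS_G = 1.989e33   # mass of the Sun in grams (rounded)
C_CM_PER_S = 2.998e10     # speed of light in cm/s (rounded)

def rest_mass_energy_erg(solar_masses):
    """E = m c^2 for a mass given in solar masses, returned in erg."""
    return solar_masses * SOLAR_MASS_G * C_CM_PER_S ** 2

def mean_power_erg_per_s(energy_erg, duration_s):
    """Average power if all the energy is radiated over duration_s."""
    return energy_erg / duration_s

energy = rest_mass_energy_erg(50)           # 50 solar masses, as in the comment
power = mean_power_erg_per_s(energy, 1e-4)  # radiated in about 10^-4 seconds

print(f"E = {energy:.2e} erg")
print(f"P = {power:.2e} erg/s")
```

The energy comes out a little under 10^56 erg and the power a little under 10^60 erg/second, so the rounded figures in the comment hold up.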

Dear John: My example is given on page 891, Z. Naturforsch. 56a, 889-892 (2001). It was in reference to an observed extreme gamma ray burst, outshining for a short moment all stars of the observable universe.

The gamma ray burst is released at the moment in-falling matter approaches and crosses the event horizon and disintegrates into gamma ray photons, see my paper page 891.

Noted Friedwardt. I think it happens before that myself, at a point when its ever-increasing infalling speed approaches and crosses the “coordinate” speed of light. I’ll have to work out where this is though.

The question of where the gamma ray burst occurs is answered on page 891, which twice makes reference to the words “event horizon”. In general relativity the event horizon is positioned at the Schwarzschild radius where v = c, equation 5.

Again noted Friedwardt. The Sun’s escape velocity is 617 km/s. Imagine we turned the Sun into a black hole and dropped a brick into it from an “infinite” distance. When the brick is circa 700,000 kilometers from the black hole it’s falling at 617 km/s. It hasn’t got far to go to accelerate to say half of 299,792 km/s. So that gamma ray burst is going to be very close to the event horizon regardless of your preferred cause.
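For a rough feel for the numbers above, here is a minimal sketch. It assumes the plain Newtonian free-fall formula v = sqrt(2GM/r) for a brick dropped from rest at infinity, which is an assumption for illustration and not a general relativistic calculation. The constants are rounded standard values and the function names are hypothetical.

```python
import math

G = 6.674e-11      # gravitational constant, m^3 kg^-1 s^-2 (rounded)
C = 2.998e8        # speed of light far from the mass, m/s (rounded)
M_SUN = 1.989e30   # solar mass, kg (rounded)
R_SUN = 6.957e8    # solar radius, m (rounded)

def infall_speed(mass, r):
    """Newtonian speed at radius r of a body dropped from rest at infinity.
    This equals the escape velocity at r."""
    return math.sqrt(2 * G * mass / r)

def schwarzschild_radius(mass):
    """Radius at which the Newtonian infall speed would equal C."""
    return 2 * G * mass / C ** 2

def radius_for_speed(mass, v):
    """Radius at which the Newtonian infall speed equals v."""
    return 2 * G * mass / v ** 2

v_surface = infall_speed(M_SUN, R_SUN)
r_s = schwarzschild_radius(M_SUN)
r_half_c = radius_for_speed(M_SUN, C / 2)

print(f"speed at one solar radius: {v_surface / 1e3:.0f} km/s")
print(f"Schwarzschild radius:      {r_s / 1e3:.2f} km")
print(f"radius where v = c/2:      {r_half_c / 1e3:.2f} km")
```

On these assumptions the brick is doing about 618 km/s at roughly 700,000 km, and it only reaches half the distant speed of light at four Schwarzschild radii, which is under 12 km from the centre. That is the sense in which the burst would be very close to the event horizon.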

The firewall image for the black hole of M87 must appear to be larger than the Schwarzschild radius because of the heating up of the in-falling matter by the gamma rays emitted at the event horizon.

Another important fact is that the event horizon for an imploding mass appears first in its center as a point, as in Schwarzschild’s interior solution. It thus follows that a hollow black hole can form from the center moving radially outward transforming all matter into radiation, leaving behind a black spherical vacuum. If the imploding mass has a large angular momentum, then you will get something like the M 87 galaxy firewall, and the axial jets as seen to come from the center of many galaxies. The sharp boundary of the dark M 87 black hole separating it from the luminous plasma around it is strong evidence for the reality of the firewall phenomenon.

Not mentioned in the press release of the M 87 black hole image is the absence of Hawking radiation, and that no wormhole can be seen. With this observational fact, all the higher 11 dimensional string/M theory speculations have been falsified and with them about 60 thousand arXiv papers. Question: How long will it take for this failure to be admitted?

You really don’t know what you are talking about.

1. What would you expect the wormhole to “look like” in this context?

2. NONE of those theories predict that the typical black hole will contain a wormhole, none of them.

Blademan, search the news for Pascal Koiran. Black hole wormholes are all over the media this week. I think I might write about that.

John Duffield,

“The reducing speed of light is transformed into your downward motion. The more it reduces the faster you fall. You fall towards the event horizon, faster and faster. All the while the speed of light is getting slower and slower. Falling bodies don’t stop accelerating, and they don’t slow down.”

I don’t see how the above can be true given your mechanism for gravity. You say that the electron is a photon in a closed path, right? So, the speed with which the electron falls cannot be larger than the vertical/downward component of this “internal” photon’s speed. So, when the speed of light goes to zero, the electron stops falling. It cannot move in any direction.

Andrei: I’m fairly sure the electron can’t make it to a place where the speed of light is zero. Imagine you have a gedanken ladder that extends down towards a black hole. You can stand on a rung of this ladder and drop an electron. It falls down towards the black hole, falling faster and faster as it passes each lower rung. In similar vein if you throw your electron downwards, its downward speed keeps increasing as it passes each lower rung. Stand on any rung, and this is always what happens. A gravitational field always makes objects fall down, faster and faster. It does this because it’s a place where the speed of light is “spatially variable”. The falling body falls faster and faster because the speed of light is getting slower and slower. And the point to note is that falling bodies don’t slow down. They always accelerate downwards. We have no evidence of falling bodies decelerating. But we do have evidence of gamma ray bursts. I think it’s clear enough that an electron gets to a place where so much potential energy, which is mass-energy, which is internal kinetic, has been converted into downward kinetic energy that it’s no longer stable. It loses integrity, and turns into one or more gamma photons and/or neutrinos.

.

That’s right, the speed with which the electron falls cannot be larger than the vertical/downward component of this “internal” photon’s speed. The moot point is that I can show you a gamma ray burst, but you can’t show me a falling body that slows down. Like I said, falling bodies don’t slow down. They always accelerate downwards. This cannot continue without limit, because if it did the falling body would be falling faster than the local speed of light. So something else has got to happen. And if falling bodies don’t slow down, it’s got to be something else.

John Duffield,

I am not making the claim that we have evidence that falling bodies slow down, I am merely trying to see what the physical consequences of the gravitational mechanism you propose are.

The problem here is that we do not have a quantitative treatment of how the “internal” photon that makes up the electron travels in a gravitational field. There are two effects here:

1. The photon’s path gets curved more and more as a result of the increasing gravitational field.

2. The photon moves slower and slower due to the same increasing gravitational field.

The first effect accelerates the photon towards the gravitational source, while the second slows it down. Depending on which effect is stronger we may have our electron accelerate or slow down.

But let’s just consider the following facts:

a. If you let an object fall towards a black hole it will reach a speed of, say 1000 m/s.

b. There exists a place above the horizon where the speed of light is 900 m/s, and also 100 m/s, 10 m/s, 1 m/s, 0.1 m/s and so on.

From a. and b. it follows that our object must slow down (at least from the point of view of a distant observer), right?

In regards to gamma-ray bursts, we have solid evidence that black holes can form and grow, as shown by the nice animation of stars orbiting our central black hole on your site. If all matter falling towards it were converted to gamma rays a black hole could not become so large, right? In fact it should never form in the first place. But in your chapter on black holes you say “So the frozen-star black hole grows like a hailstone”. You cannot have matter being deposited near the horizon and the black hole grow if all matter is converted into gamma rays and sent back into space. So, I guess that what’s happening is that the objects accelerate up to a point and then slow down to a complete stop at the event horizon. Assuming your hypothesis is correct this point can only be determined by a detailed calculation.

One other point that needs to be worked out is that according to your hypothesis black holes should not orbit each other. This is a big problem because we have evidence of stars orbiting black holes, and according to Newton’s third law the stars should also exert a force on the black hole. It might be the case that, even if the type of wave we call electromagnetic disappears at the horizon, other waves continue to exist.

Andrei: the first effect accelerates the electron towards the gravitational source, while the second effect slows down the internal photon, or the electron spin if you prefer. As to whether the electron itself slows down, I just don’t see how it can. It comes back to the gedanken ladder scenario where I tried to convey the crucial point about falling bodies: they fall down, they don’t slow down. The latter position is not a dreadful position to take. That’s what Einstein essentially said in his 1939 paper On a Stationary System With Spherical Symmetry Consisting of Many Gravitating Masses. But that was before we knew about gamma-ray bursts. It was the detection of these by the US military that re-awakened interest in general relativity.

.

No, I don’t think it follows from a and b that our object must slow down. Light slows down, but not our object. Falling bodies fall down because light slows down. As regards black holes forming and growing, they can grow by “feeding” off gamma rays. It makes no difference to the black hole whether it grows by the accretion of a 511keV electron or a 511keV photon. The frozen-star black hole grows like a hailstone because light is deposited near the horizon. Please note that this is Friedwardt Winterberg’s hypothesis, not mine. He’s the guy who had the original idea for GPS.

.

Yes, according to what I’m saying, black holes should not orbit each other. Yes, it’s a big problem. Because a gravitational field is a place where there’s a gradient in the speed of light. So light refracts downwards, and matter falls down because of the wave nature of matter and because spin is real. So there is no mechanism by which a black hole can fall down. As for Newton’s third law, perhaps it doesn’t apply here because gravity is not really an action at a distance. It’s a local refraction. Don’t forget Newton’s 1692 letter to Richard Bentley: “That gravity should be innate inherent {essential} to matter so that one body may act upon another at a distance through a vacuum without the mediation of any thing else by through which their action or force {may} be conveyed from one to another is to me so great an absurdity that I believe no man who has in philosophical matters any competent faculty of thinking can ever fall into it”.

John Duffield,

“As to whether the electron itself slows down, I just don’t see how it can.”

You said:

1. Electron is light (a photon going in a closed path)

2. In a strong gravitational field light slows down.

From 1 and 2 it follows that an electron slows down.

Imagine the following scenario: An electron is accelerated to 0.99c and sent directly towards the surface of a neutron star. I am not using a black hole so that we do not get distracted by the gamma ray burst hypothesis. We know that matter can exist on the surface of a neutron star, it doesn’t get transformed into gamma rays. We also know that the speed of light at the surface is about 0.4c. So, you either accept that our electron slows down to 0.4c before touching the surface or you need to say that the electron can travel at twice the speed of light.

The point here is that the electron does accelerate relative to the speed of light. So, if you start with 0.99c you could go to 0.999c when the electron hits the surface. It’s just that “c” at the surface is 0.4c at a large distance from the neutron star. So, its absolute speed decreases.
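The arithmetic behind that last step can be spelled out in a short sketch. It takes the figures in the comment at face value (0.99 and 0.999 of the local speed of light, and a surface light speed of 0.4c as reckoned from far away). None of these are measured values, and the function name is hypothetical.

```python
# Figures taken at face value from the comment above; they are assumptions.
C_FAR = 1.0               # speed of light far from the star, used as the unit
C_SURFACE = 0.4 * C_FAR   # assumed speed of light at the surface, seen from afar

def absolute_speed(fraction_of_local_c, local_c):
    """Speed as reckoned by the distant observer, in units of C_FAR."""
    return fraction_of_local_c * local_c

start = absolute_speed(0.99, C_FAR)       # electron launched far from the star
end = absolute_speed(0.999, C_SURFACE)    # electron arriving at the surface

print(f"far away:   {start:.4f} c")
print(f"at surface: {end:.4f} c")
```

On these numbers the electron gains speed relative to the local speed of light while its speed as reckoned from far away drops from 0.99c to just under 0.4c. Whether that is what actually happens is exactly what is in dispute in this thread.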

Now, we did not see objects slowing down simply because we did not explore such a regime. The gravitational field of earth is very weak, and a pen falling down cannot accelerate anywhere near c. So, we don’t see pens slowing as they fall. But if you accelerate a pen to 0.99c towards a neutron star, it has to slow down to 0.4c before touching the surface.

“So there is no mechanism by which a black hole can fall down.”

Why are you dismissing the possibility that there are other types of waves of space, different than light, that could play the same role for the black holes? Maybe there is a sort of phase transition so that transverse waves such as light cannot propagate anymore, but the energy is simply converted to some other waves.

Andrei: I’m sorry to be slow replying. And I’m also sorry, but I just don’t accept from 1 and 2 it follows that an electron slows down. Gravitational fields make matter fall down faster and faster. They don’t make matter fall down slower and slower. Re the electron accelerated to 0.99c and sent towards a neutron star, I don’t accept that it will slow down to 0.4c, and nor do I accept that it can travel at more than twice the local speed of light. I say it breaks up instead, and that the result is a gamma ray burst.

.

No, we do not see objects slowing down. But we do see gamma ray bursts.

.

“So there is no mechanism by which a black hole can fall down”. That’s what I’m saying. Einstein said light curves because the speed of light is spatially variable. We have good evidence for the wave nature of matter, and I think we have good evidence that the electron is a “dynamical spinor”, wherein electron spin is real. So we can reason that the electron is like light in a closed path, and the electron falls down because the horizontal component is refracted downwards. This mechanism just isn’t there for a black hole.

.

I’m not dismissing the possibility that there are other types of waves of space. In fact, I think waves are more fundamental than fields. But I am saying the mechanism by which an electron falls down, or a brick, just isn’t there for a black hole. I don’t think you can rescue this by proposing some other wave in space for which we have no evidence. Especially when it would seem that gravitational waves propagate at the speed of light, which is zero in a black hole.

Interesting stuff John. I like how you describe gamma ray bursts, makes a lot of sense to me.

But isn’t there a problem?…

At the event horizon of a black hole the speed of light is zero. So light has stopped there. I like your thought experiment with capturing the photon inside a light box effectively increasing its mass. So if the light has stopped at the event horizon, and it is zero further towards the centre of the black hole, then there can be no energy in that region, and whatever is there (appreciating that it may be a hole in space itself) cannot be contributing to the mass/energy of the black hole.

The only way out of this seems to be to understand that all the mass/energy of a black hole & the only cause of its gravitational field (effect on the inhomogeneity of the surrounding space), is the EM energy orbiting the black hole, and furthermore that a photon fired directly at a black hole will never actually cross the event horizon. Unless this is wrong (please do correct me), it would seem your hail stone analogy where you say the water droplet landing on the surface doesn’t ever go beyond the surface but does get buried is slightly misleading. Shouldn’t the water droplet never actually freeze, and never get buried, and the hailstone actually be hollow, with a hollow that increases in size as more droplets land on it?

Jonathan: don’t forget the way the hailstone grows. The water molecule alighting on the surface doesn’t pass through the surface. But because it gets surrounded and buried by other water molecules, the surface passes through it. I think it’s the same for a photon and the event horizon. The photon doesn’t pass through the event horizon, the event horizon passes through it. I think this hailstone analogy is apt because the black hole was originally called the frozen star. See introducing the black hole by Remo Ruffini and John Wheeler who said “in this sense the system is a frozen star”. So IMHO the black hole isn’t a hole in space, it’s more like “solid space”.

Hi John, yes I get your idea that ‘the photon doesn’t pass through the event horizon, the event horizon passes through it’, and I think this analogy is better than the mistaken idea that the photon (or even worse, the particle) travels through the event horizon to get subsumed into a mythical point singularity at the centre of the black hole….

However, you’ve possibly missed my point which is that because the speed of light is zero at and beyond the event horizon, there cannot exist any photons at and beyond the event horizon. A photon is a wave in space, a wave needs to wave, if a wave stops waving it ain’t a wave. And as per your thought experiment with increasing the mass of a mirror box by capturing photons in it, if the photons are static clearly there is no resistance to change in motion. So it seems obvious to me that there cannot be anything at or beyond the event horizon of a black hole that contributes to the mass of the black hole, so if black holes grow it can only be because of an increase in energy around the outside of the event horizon.

It is extremely interesting that Wikipedia says Marcia Bartusiak traces the term ‘black hole’ back to Robert Dicke, and that this is the term that caught on. I suspect Dicke called it a ‘hole’ because the logical conclusion from looking at GR from a variable speed of light perspective is that it genuinely is a hole in space, i.e. nothing can go there. It is as fanciful a region as a parallel universe.

When one entertains the idea that a black hole genuinely is an inaccessible hole in space, it seems obvious that this is the reason why there is a gravitational ‘field’ around the black hole, i.e. because the space that was in the centre of a black hole is now pushed into the surrounding space (making it ‘thicker’). Going back to your analogy of injecting more jelly into a block of gin-clear elastic jelly to create a pressure gradient… it’s not so much that one is injecting more jelly, but that one is injecting a bubble.

And for me at least, the natural step of thought is that ‘bubbles’ in space are the *sole* reason for ‘pressure gradients’ (gravitational fields) in space. i.e. the space within the double loop trivial knot that forms the electron is also (in some way) a very tiny bubble in space.

It seems that Einstein was also interested in this idea:

https://en.wikipedia.org/wiki/Black_hole_electron

“A paper published in 1938 by Albert Einstein, Leopold Infeld and Banesh Hoffmann showed that if elementary particles are treated as singularities in spacetime, it is unnecessary to postulate geodesic motion as part of general relativity.”

Thoughts?

Jonathan, sorry to be slow replying. I’ve got house guests.

.

However, you’ve possibly missed my point which is that because the speed of light is zero at and beyond the event horizon, there cannot exist any photons at and beyond the event horizon. A photon is a wave in space, a wave needs to wave, if a wave stops waving it ain’t a wave.

.

True, but that photon is also a “pulse” of four-potential. The sinusoidal electric wave is the spatial derivative of this potential, and the orthogonal sinusoidal magnetic wave is the time derivative. And like I was saying in what energy is I think of it as a pressure-pulse of space propagating through space. Because I also think of a gravitational field as “a pressure gradient in space”. If you had a big star A, and a little star B, plus a line of photons going from A to B, then star A would shrink along with its pressure gradient in space, whilst the opposite would happen to B.

.

And as per your thought experiment with increasing the mass of a mirror box by capturing photons in it, if the photons are static clearly there is no resistance to change in motion.

.

Maybe. I’m sure there’s no mechanism for gravitational attraction inside a black hole. It wouldn’t fall down like an electron does, or a pencil. I also imagine a black hole is something that you couldn’t push or pull in the usual sense. As such there would be a difference between its active gravitational mass and its inertial mass. I haven’t written about this, but I wonder if a black hole is something that would not be slowed down if it hit the Earth. It would go straight on through, like an unstoppable bullet, leaving a hole like the Silver Surfer:

.

.

So it seems obvious to me that there cannot be anything at or beyond the event horizon of a black hole that contributes to the mass of the black hole, so if black holes grow it can only be because of an increase in energy around the outside of the event horizon.

.

We’ll have to agree to disagree on this Jonathan. It stems from our different view of energy. I note your other comment on what energy is.

.

It is extremely interesting that Wikipedia says Marcia Bartusiak traces the term ‘black hole’ back to Robert Dicke, and that this is the term that caught on. I suspect Dicke called it a ‘hole’ because the logical conclusion from looking at GR from a variable speed of light perspective is that it genuinely is a hole in space, i.e. nothing can go there. It is as fanciful a region as a parallel universe.

.

That’s fair enough, because it is a place where you can’t go. Note how in the black holes article I said “whilst it looks like a hole in space, the frozen-star black hole is like solid space too”. An iceberg is a place where a fish can’t go, but that doesn’t make it a hole in the sea.

.

When one entertains the idea that a black hole genuinely is an inaccessible hole in space, it seems obvious that this is the reason why there is a gravitational ‘field’ around the black hole, i.e. because the space that was in the centre of a black hole is now pushed into the surrounding space (making it ‘thicker’). Going back to your analogy of injecting more jelly into a block of gin-clear elastic jelly to create a pressure gradient… it’s not so much that one is injecting more jelly, but that one is injecting a bubble.

.

The thing is that your bubble contains air, and that air has a pressure which pushes the jelly outwards. Here’s how I think: I am the physics detective, and I have a toolbox. But when I open my toolbox, the only thing that’s in there is… space.

.

And for me at least, the natural step of thought is that ‘bubbles’ in space are the *sole* reason for ‘pressure gradients’ (gravitational fields) in space. i.e. the space within the double loop trivial knot that forms the electron is also (in some way) a very tiny bubble in space. It seems that Einstein was also interested in this idea: https://en.wikipedia.org/wiki/Black_hole_electron “A paper published in 1938 by Albert Einstein, Leopold Infeld and Banesh Hoffmann showed that if elementary particles are treated as singularities in spacetime, it is unnecessary to postulate geodesic motion as part of general relativity”. Thoughts?

.

The paper is accessible here: https://sci-hub.do/10.2307/1968714. My thoughts are: why didn’t Einstein use Born-Infeld theory? See https://royalsocietypublishing.org/doi/pdf/10.1098/rspa.1935.0093. Page 12 talks about the inner angular moment of the electron. I don’t understand why Einstein contributed very little to physics in his later years. Or why he put his name to a rambling mathematical paper that tried to model the motion of the electron with no underlying electron model, even though Infeld had one. I sometimes wonder if Einstein had a stroke or something. It’s as if he somehow lost his vision, his passionate curiosity, his mojo. I’ve tried researching this, but I’ve come up empty-handed.

Your hypothesis is interesting. Let me know if I understood correctly:

An electron traveling at 0.99c for an observer distant from the neutron star will convert into pure energy (=a photon) before reaching the surface of the neutron star, where the speed of light is 0.4c relative to the same observer, more precisely it will do so at some point where the speed of light slows down to 0.99 times its original value. From there you won’t see any electron anymore, and the acceleration paradox disappears. The star can still capture material moving slow enough with respect to the speed of light at its surface.

I do have some questions though:

1) about the conservation of charge for the electron: are you implying conservation of charge is not a thing at some point in the gravitational field?

2) it’s my understanding that you (or rather, Einstein) do not ascribe the slowing of light to interactions with the medium, like it happens in water. So it’s not REALLY a refraction in the usual sense. What is actually causing light to slow down?

3) if we assume a linear density gradient inside a star, a black hole can’t form from the inside out like a hailstone, because at some point below the surface of the star gravity will actually start decreasing. The gravitational field will be strongest at some point below the surface before the center, and that’s where the black hole will form the event horizon when density becomes high enough. What happens next? Does the event horizon grow both outwards and inwards to the center? Are black holes hollow, if that even means anything?

This isn’t my hypothesis Leon. It’s Friedwardt Winterberg’s. What a shame it wasn’t Einstein’s.

.

An electron traveling at 0.99c for an observer distant from the neutron star will convert into pure energy (=a photon)

.

I don’t think it can convert into a single photon due to conservation of angular momentum. I think it will convert into a combination of photons and neutrinos departing in different directions. For an analogy, think in terms of a flywheel breaking up.

.

before reaching the surface of the neutron star, where the speed of light is 0.4c relative to the same observer, more precisely it will do so at some point where the speed of light slows down to 0.99 times its original value.

.

I’m not sure of the exact point where the breakup would appear. I’ve never talked about this in a neutron star context, only a black hole context.

.

From there you won’t see any electron anymore, and the acceleration paradox disappears. The star can still capture material moving slow enough with respect to the speed of light at its surface.

.

What acceleration paradox? Are you talking about a body falling faster and faster, such that if it was already moving towards the gravitating body at 0.99c, it would have to end up falling faster than light?

.

1) about the conservation of charge for the electron: are you implying conservation of charge is not a thing at some point in the gravitational field?

.

Yes. I’m saying conservation of charge is not absolute. It’s a law that can be broken.

.

2) it’s my understanding that you (or rather, Einstein) do not ascribe the slowing of light to interactions with the medium, like it happens in water. So it’s not REALLY a refraction in the usual sense. What is actually causing light to slow down?

.

Refraction is the result of light slowing down. Whether this is caused by the nature of space changing, or by interactions with atoms, it’s still refraction. The former is however arguably “purer” than the latter. As a result it isn’t wavelength dependent. We do not see rainbows around gravitational lenses.

.

3) if we assume a linear density gradient inside a star, a black hole can’t form from the inside out like a hailstone, because at some point below the surface of the star gravity will actually start decreasing.

.

You need to think about a collapsing star. You have a lot of falling matter making the centre denser and denser. Whilst there’s no gravity at the centre, it keeps getting denser and denser. At some point the energy-density gets so high that the speed of light goes to zero at that location. But the falling matter keeps on coming, so this central region gets bigger.

.

The gravitational field will be strongest at some point below the surface before the center, and that’s where the black hole will form the event horizon when density becomes high enough. What happens next? Does the event horizon grow both outwards and inwards to the center? Are black holes hollow, if that even means anything?

.

It’s the gravitational potential that counts. The gravitational field is the gradient in potential. The black hole forms where the potential is lowest, not where the gradient is steepest.
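The difference between potential and field can be illustrated with the textbook Newtonian uniform-density sphere. This is a sketch only. Uniform density is an assumption chosen because it has a simple closed form (the question above was about a linear density gradient), the units are chosen so that G = M = R = 1, and the function names are hypothetical.

```python
def field_strength(r, G=1.0, M=1.0, R=1.0):
    """Magnitude of the Newtonian field of a uniform sphere of radius R."""
    if r <= R:
        return G * M * r / R ** 3      # grows linearly from zero at the centre
    return G * M / r ** 2              # inverse-square outside the sphere

def potential(r, G=1.0, M=1.0, R=1.0):
    """Newtonian potential of the same sphere, zero at infinity."""
    if r <= R:
        return -G * M * (3 * R ** 2 - r ** 2) / (2 * R ** 3)
    return -G * M / r

# Sample from the centre out to three radii in steps of R/10.
radii = [i / 10 for i in range(0, 31)]
r_lowest_potential = min(radii, key=potential)
r_strongest_field = max(radii, key=field_strength)

print(f"potential is lowest at r = {r_lowest_potential}")
print(f"field is strongest at r = {r_strongest_field}")
```

In this simple model the field is zero at the centre and strongest at the surface, while the potential is lowest at the centre. A different density profile moves the place where the field peaks, but the potential still bottoms out at the centre.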

.

PS: Sorry to be slow replying. I’ve had a lot on recently.

Thanks for the reply. Don’t worry about being slow, it’s me that is constantly bombarding you with questions, it’s just that I’m very passionate about the topic. Yes, that’s exactly what I meant by acceleration paradox.

This is going to be a long reply, please bear with me. I’ll start with a direct question:

Even in the case of black holes, when would you think the decomposition into photons/neutrinos would happen?

Now, on to my own thoughts.

1) I think we’re forgetting a few important points, namely time dilation and length contraction; for an external observer objects falling into the black hole will appear to slow down and contract due to the different clock speed (and so different meter). I can understand an inertial observer will instead see nothing strange, up until he touches the event horizon (which will take an infinite amount of time even from his point of view) where time just stops. Yes, objects always accelerate in a gravitational field and fall faster and faster, but the rate of change of the acceleration becomes slower and slower as to never exceed the local speed of light, for ANY observer. Length contraction will then give the illusion that the object actually slows down.

On a hypothetical ladder to the event horizon (with infinite steps), each step determines a frame of reference, each with its own speed of light as determined from the first step of the ladder at infinite distance.

So for an electron going at 0.9 c from the very first step, at the second step it will appear to accelerate to 0.96c, at the third step to 0.99c, the fourth to 0.999c, and so on.

If we look at the same electron from the second step instead, at the first step the electron will actually appear to travel slower than 0.9c, due to time dilation. It’s important to remember that it’s only the relative motion between objects that matters, so this scenario is equivalent to saying the observer on the second step is moving at 0.9c towards the electron before it accelerates. This means the movement of the electron is time dilated. So, at first it is say 0.8c, then it accelerates towards the second step to 0.9c, and then down as before.

2) After thinking about it more carefully, I don’t think the object will ever reach the point of breaking in any frame of reference. Someone on the ladder to the event horizon will perceive objects above him accelerate way slower than at eye level, similar to how the weight of objects is lower at the top of Mount Everest (because they experience less acceleration) with respect to the surface of the Earth. Similarly, they will perceive objects below them accelerating faster and faster, until they hit relativistic speed where time dilation and length contraction will not allow the object to cross the speed of light at that step of the ladder. This is perfectly consistent at any step before the event horizon, and the ladder has infinite steps to get there.

So the object never really goes fast enough to reach the speed of light, locally, at any step. Hence, the electron never breaks down.

3) I believe the only effect able to do so at this point would be tidal forces so strong with respect to the effects of time dilation and length contraction as to literally mess with the wave propagating around and around in a closed loop (i.e. spaghettification). This translates to a point in space where the rate of change of the gradient of the speed of light is faster than the rate at which light propagates (“revolves”) inside an electron, so light has no option but to travel in a straight line.

4) But then we go back to the problem of how the radiation actually escapes, since the gradient can only increase TOWARDS the event horizon; the photon would be forced to travel only in that direction.

The electron is a bispinor though, so I can imagine that depending on the orientation of the electron during the fall only one of the two Weyl representations (corresponding to a photon rotation and a neutrino rotation) actually needs to curve downwards, like a sonar, for the electron to be able to fall (since both neutrinos and light propagate at the same speed, I don’t see why neutrinos would not be affected in the same way by the gravitational field). This means that on average, half the time it’s the neutrino destined to reach for the event horizon, and radiation is free to escape in any orthogonal direction. Of course if the spin is perfectly aligned with the gravitational field, which we will assume perfectly spherical, the Weyl representation escaping orthogonally would be forced to orbit the black hole forever, but any deviation from this alignment would determine an escape route.

I haven’t forgotten you Leon. I will reply tomorrow.

Even in the case of black holes, when would you think the decomposition in photons/neutrinos would happen?

.

I’m sorry Leon, I just don’t know. But my gut feel is that it happens way before the electron gets anywhere near the event horizon.

.

1) I think we’re forgetting a few important points, namely time dilation and length contraction; for an external observer objects falling into the black hole will appear to slow down and contract due to the different clock speed (and so different meter). I can understand an inertial observer will instead see nothing strange, up until he touches the event horizon…

.

It’s a fairy tale, Leon. When did you ever see a falling object slow down? And gamma ray bursts are real, they don’t happen for nothing. The falling observer will see one. He will BE one.

.

(which will take an infinite amount of time even from his point of view) where time just stops.

.

That’s what they say. But time is really just a cumulative measure of motion. And falling bodies do not stop falling. They don’t slow down and stop.

.

Yes, objects always accelerate in a gravitational field and fall faster and faster, but the rate of change of the acceleration becomes slower and slower as to never exceed the local speed of light, for ANY observer. Length contraction will then give the illusion that the object actually slows down.

.

No, the acceleration increases in line with the increasing gravitational field strength. That field strength is a measure of the local gradient in the coordinate speed of light. Something has got to give Leon.

.

On a hypothetical ladder to the event horizon (with infinite steps), each step determines a frame of reference, each with its own speed of light as determined from the first step of the ladder at infinite distance.

.

A frame of reference isn’t something real. Nor is your ladder. The reducing speed of light is real. That’s why the falling body falls down. People will tell you a body falling into a black hole ends up falling at the speed of light. But at the event horizon, the speed of light is zero. And since falling bodies don’t slow down, Houston you have a problem.
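To put a number on the “speed of light is zero at the event horizon” statement, here is a minimal sketch. It assumes the standard Schwarzschild coordinate expression for radially moving light as reckoned by a distant observer, c(r) = c(1 − r_s/r). The function names are made up for illustration, and the result is a coordinate quantity, so treat it as a rough guide rather than the last word.

```python
# Illustrative sketch only: radial coordinate speed of light outside a
# non-rotating mass, using Schwarzschild coordinates (an assumption here).

C = 299_792_458.0      # speed of light far from the mass, m/s
G = 6.674_30e-11       # gravitational constant, m^3 kg^-1 s^-2
M_SUN = 1.988_47e30    # solar mass, kg


def schwarzschild_radius(mass_kg: float) -> float:
    """Event horizon radius r_s = 2GM/c^2 for a non-rotating mass."""
    return 2.0 * G * mass_kg / C**2


def radial_coordinate_light_speed(r_m: float, mass_kg: float) -> float:
    """Coordinate speed of radially moving light at radius r_m (r_m >= r_s)."""
    r_s = schwarzschild_radius(mass_kg)
    if r_m < r_s:
        raise ValueError("this sketch only covers r >= r_s")
    return C * (1.0 - r_s / r_m)


if __name__ == "__main__":
    mass = 10 * M_SUN
    r_s = schwarzschild_radius(mass)
    # Step inwards towards the horizon and watch the value fall towards zero.
    for multiple in (100.0, 10.0, 2.0, 1.1, 1.0):
        speed = radial_coordinate_light_speed(multiple * r_s, mass)
        print(f"r = {multiple:6.1f} r_s  ->  {speed / C:.3f} c")
```

On this expression the value falls smoothly from c at large distance to exactly zero at r = r_s, which is the gradient referred to above.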

.

So for an electron going at 0.9 c from the very first step, at the second step it will appear to accelerate to 0.96c, at the third step to 0.99c, the fourth to 0.999c, and so on. If we look at the same electron from the second step instead, at the first step the electron will actually appear to travel slower than 0.9c, due to time dilation. It’s important to remember that it’s only the relative motion between objects that matters, so this scenario is equivalent to saying the observer on the second step is moving at 0.9c towards the electron before it accelerates. This means the movement of the electron is time dilated. So, at first it is say 0.8c, then it accelerates towards the second step to 0.9c, and then down as before.

.

No Leon. Time dilation only occurs because the local speed of light is less than some other local speed of light. It happens in the SR context because macroscopic motion through space reduces the local motion of the electron, because the total motion is c. Think of the wave nature of matter, then think of the wave in a closed circular path, then think of stretching out a helical spring. One turn round the path takes longer because the path is now helical instead of circular. In the GR context the local speed of light is less than some other local speed of light because light goes slower when it’s lower. That’s what causes the electron to fall down. So again, Houston you have a problem.
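The SR half of that can be put into numbers. This is a minimal sketch, assuming “the total motion is c” means the internal motion and the motion through space combine by Pythagoras, like the turn and the stretch of the helical spring. The function names are made up for illustration.

```python
import math


def internal_rate(v_over_c: float) -> float:
    """Fraction of c left over for the internal (closed-path) motion.

    Assumption for illustration: internal and translational motion are the
    two sides of a right triangle whose hypotenuse is c.
    """
    if not 0.0 <= v_over_c < 1.0:
        raise ValueError("v_over_c must be in [0, 1)")
    return math.sqrt(1.0 - v_over_c**2)


def dilation_factor(v_over_c: float) -> float:
    """How much longer one turn round the path takes (the Lorentz factor)."""
    return 1.0 / internal_rate(v_over_c)


if __name__ == "__main__":
    for v in (0.0, 0.6, 0.9, 0.99):
        print(f"v = {v:.2f} c: one turn takes {dilation_factor(v):.3f} times as long")
```

With this assumption the factor that comes out is the usual Lorentz factor, for example 1.25 at 0.6c.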

.

2) After thinking about it more carefully, I don’t think the object will ever reach the point of breaking in any frame of reference. Someone on the ladder to the event horizon will perceive objects above him accelerate way slower than at eye level, similar to how the weight of objects is lower at the top of Mount Everest (because they experience less acceleration) with respect to the surface of the Earth. Similarly, they will perceive objects below them accelerating faster and faster, until they hit relativistic speed where time dilation and length contraction will not allow the object to cross the speed of light at that step of the ladder. This is perfectly consistent at any step before the event horizon, and the ladder has infinite steps to get there.

.

You’re going to have to think again Leon, because that electron is falling faster and faster. Observers on the lower rungs of your ladder are time dilated, so as far as they’re concerned, that electron is falling faster.

.

So the object never really goes fast enough to reach the speed of light, locally, at any step. Hence, the electron never breaks down.

.

I would urge you to read Winterberg’s paper. And How Gravity Works. Something has got to give, Leon. As to why Einstein didn’t predict gamma ray bursts I do not know. It seems to me that he lost his mojo after about 1920.

.

3) I believe the only effect able to do so at this point would be tidal forces so strong with respect to the effects of time dilation and length contraction as to literally mess with the wave propagating around and around in a closed loop (i.e. spaghettification). This translates to a point in space where the rate of change of the gradient of the speed of light is faster than the rate at which light propagates (“revolves”) inside an electron, so light has no option but to travel in a straight line.

.

Then it wouldn’t be an electron, it would be a photon.

.

4) But then we go back to the problem of how the radiation actually escapes, since the gradient can only increase TOWARDS the event horizon; the photon would be forced to travel only in that direction.

The electron is a bispinor though, so I can imagine that depending on the orientation of the electron during the fall only one of the two Weyl representations (corresponding to a photon rotation and a neutrino rotation) actually needs to curve downwards, like a sonar, for the electron to be able to fall (since both neutrinos and light propagate at the same speed, I don’t see why neutrinos would not be affected in the same way by the gravitational field). This means that on average, half the time it’s the neutrino destined to reach for the event horizon, and radiation is free to escape in any orthogonal direction. Of course if the spin is perfectly aligned with the gravitational field, which we will assume perfectly spherical, the Weyl representation escaping orthogonally would be forced to orbit the black hole forever, but any deviation from this alignment would determine an escape route.

.

I don’t know the answer to that. But I would imagine the break-up occurs way before the photon sphere, and neither photons nor neutrinos would end up orbiting the black hole.

Very nice article you make me see the world in a way no one else ever has yes, car insurance is required for drivers in almost every state. It is not a requirement in New Hampshire for drivers to buy car insurance, but drivers there do need to show proof that they can afford to pay the cost of an accident if it’s their fault. Most drivers have car insurance because it is the law, but that doesn’t mean you should only buy the minimum required coverage. Car insurance may be able to help you pay for medical expenses that health insurance normally won’t cover.

For more detail visit our link.

https://fukatsoft.com/b/basic-things-you-need-to-know-about-car-insurance-quote/

Ha, ha, ha… it was bound to happen sooner or later, John!

It got past the spam filter, Greg. That’s because it doesn’t contain any words like [the name of those little blue pills men supposedly want to buy]. Oooh, there’s only 3 emails in the spam folder today. When I looked the other day there were 20. Hang on a minute: Empty spam. And then there were none! I shall neutralise the spam comment above at some point, but I’ll leave it there for now for context.

I feel your pain John, I get dozens of boner pill spam and others of similar ilk per week myself, LOL! How dare they assume my 65 year old tired ass needs “enhancement”.

LOL! We are getting into too much information territory here Greg! This is a family website! LOL!

Talk about Fibonacci numbers: really fascinating. Also the torus universe, as a way to sort of unite some of the issues in the explainable universe. Einstein’s cosmological constant intrigues me. Could it be different in connection to higher dimensions, if you believe in higher dimensions/lower dimensions (how loss of dimension occurs with loss of rotational energy inside a black hole)? I like to think about the universe as intelligent, able to create itself and its own consciousness, and constantly in feedback and communication to maintain homeostasis against all the odds. That is always hard when there are things with their own agendas influencing the path of the universe at the same time, with little knowledge of the rest of the world.

Some links to cool projects and ideas:

http://hi.gher.space/forum/viewtopic.php?f=24&t=2414#p26837

https://www.researchgate.net/publication/326972894_Processes_of_Science_and_Art_Modeled_as_a_Holoflux_of_Information_Using_Toroidal_Geometry

https://www.researchgate.net/publication/341314308_Consciousness_in_the_Universe_is_Tuned_by_a_Musical_Master_Code_Part_3_A_Hydrodynamic_Superfluid_Quantum_Space_Guides_a_Conformal_Mental_Attribute_of_Reality

I take a much more “plain vanilla” view, Jude. Whilst I’m forever quoting Einstein and am something of an Einstein fan, I’m not a fan of his 1917 “outlandish” idea of a closed curved universe. I think the universe is flat, and since it’s expanding, I also think it’s finite. See what you make of this: https://physicsdetective.com/the-edge-of-the-universe/ . As for universal consciousness, I have some sympathy with Pantheism, but I’m not a fan of religion, either. Sorry.

The problem here is that your theory violates a bunch of conservation laws.

The electron turning into gamma rays violates B-L conservation AND conservation of charge.
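The bookkeeping behind that objection can be checked in a few lines. This is a minimal sketch using the standard quantum number assignments (electric charge in units of e, baryon number B, lepton number L). The table and function names are made up for illustration.

```python
# Illustrative bookkeeping of additive quantum numbers for a reaction.
# Charge is in units of the elementary charge e.
PARTICLES = {
    "electron": {"charge": -1, "B": 0, "L": 1},
    "positron": {"charge": 1, "B": 0, "L": -1},
    "photon": {"charge": 0, "B": 0, "L": 0},
}


def totals(names):
    """Sum electric charge and B-L over a list of particle names."""
    charge = sum(PARTICLES[n]["charge"] for n in names)
    b_minus_l = sum(PARTICLES[n]["B"] - PARTICLES[n]["L"] for n in names)
    return {"charge": charge, "B-L": b_minus_l}


def violated(initial, final):
    """Return the names of the quantities that differ between the two sides."""
    before, after = totals(initial), totals(final)
    return [key for key in before if before[key] != after[key]]


if __name__ == "__main__":
    # A lone electron becoming two gamma photons: both quantities change.
    print(violated(["electron"], ["photon", "photon"]))
    # Electron-positron annihilation: nothing is violated.
    print(violated(["electron", "positron"], ["photon", "photon"]))
```

A lone electron has charge −1 and B−L = −1, whereas any number of photons has zero for both, so the first reaction changes both totals while ordinary electron-positron annihilation changes neither.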

If a black hole isn’t affected by the gravity of other objects then

1. This leaves no explanation for the LIGO observations.